Digital zoom 2.0 on smartphones: Is a cropped "optical zoom" nonsense?

The iPhone 14 Pro has four cameras, at least according to Apple's product page, which lists a main camera, an ultra-wide angle, a 2x zoom, and a 3x zoom. However, only three cameras can be seen on the back. The solution to this seeming conundrum? A "virtual camera" with 2x zoom, made possible by the 48 MP quad-pixel sensor. Is this cheeky marketing or actually useful information?

The insane megapixel race keeps upping the ante in 2022. After the first 108 MP smartphone hit the market at the end of 2019, the 200 MP mark was crossed this month with the Motorola Edge 30 Ultra (review) and Xiaomi 12T Pro (hands-on).

Even the iPhone 14 Pro mentioned earlier has jumped to a 48 MP camera this year, which is very unusual for Apple. However, the exciting thing about extremely high-resolution sensors is not the 100 MB photos for prints at home, but the zoom they make possible.

How does the 2x zoom in the iPhone 14 Pro work?

Unlike full-blown digital cameras, the vast majority of smartphones do not have zoom lenses that can change the focal length by shifting the lenses, due to space limitations. Instead, there are separate sensors for different zoom levels. The iPhone 14 Pro has one sensor for the ultra-wide angle (0.5x), one for the wide-angle (1x), and one for the telephoto (3x).

When zooming using the two-finger gesture in the camera app, the smartphone now digitally zooms into the image of one camera until the magnification of the next camera module is reached before it makes the switch. As digital magnification increases, the quality will naturally decrease. The degree of decrease depends on the camera in question.

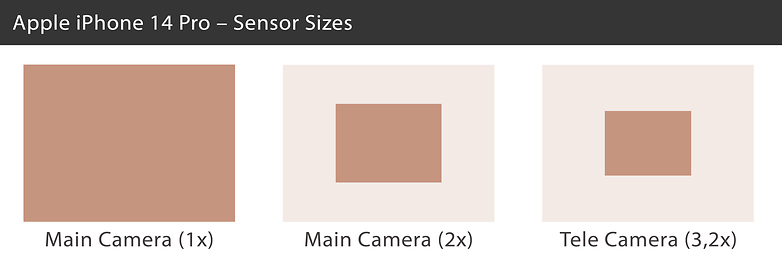

The iPhone 14 Pro's main camera uses a 1/1.28-inch image sensor that measures 9.8 by 7.3 millimeters, with a 48 MP resolution. For the 2x zoom, Apple simply crops a 12-megapixel section from the center of the sensor. That section still measures 4.9 by 3.7 millimeters and thus roughly corresponds to the 1/3-inch format. That is still enough for decent photos under good lighting conditions.
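
The crop arithmetic can be sketched in a few lines of Python. This is only an illustration of the geometry, using the sensor figures quoted above; the function name `center_crop` is made up for this example and says nothing about how Apple's pipeline is actually implemented.

```python
def center_crop(width_mm, height_mm, megapixels, zoom):
    """Size and resolution of the centered crop used for a digital zoom factor.

    Each side of the crop shrinks by the zoom factor, so the pixel count
    (and the area) shrinks by the square of the zoom factor.
    """
    return width_mm / zoom, height_mm / zoom, megapixels / zoom ** 2


# Main sensor of the iPhone 14 Pro as quoted in the article: 9.8 x 7.3 mm, 48 MP
w, h, mp = center_crop(9.8, 7.3, 48, 2)
print(f"2x crop: {w:.2f} x {h:.2f} mm, {mp:.0f} MP")
```

A 2x crop halves each side, which is why exactly a quarter of the 48 MP, that is 12 MP, remains.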

At 3x zoom, Apple then switches to the next 12-megapixel sensor (which is actually more like 3.2x with an equivalent focal length of 77 millimeters). With the 1/3.5-inch format or 4.0 by 3.0 millimeters, the sensor is again a bit smaller than the "2x sensor" that was cut out of the center of the main camera.
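
To put "a bit smaller" into numbers, here is a rough area comparison based on the millimeter figures quoted in the text. The helper `area_mm2` is a hypothetical name for this sketch, and the dimensions are the rounded values from the article, not measured data.

```python
def area_mm2(width_mm, height_mm):
    """Sensor (or crop) area in square millimeters."""
    return width_mm * height_mm


main = area_mm2(9.8, 7.3)     # full main sensor
crop_2x = area_mm2(4.9, 3.7)  # 12 MP center crop used for 2x
tele = area_mm2(4.0, 3.0)     # telephoto sensor

print(f"main: {main:.1f} mm2, 2x crop: {crop_2x:.1f} mm2, telephoto: {tele:.1f} mm2")
print(f"2x crop / telephoto area ratio: {crop_2x / tele:.2f}")
```

With these figures, the cropped "2x sensor" ends up with about one and a half times the area of the dedicated telephoto sensor.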

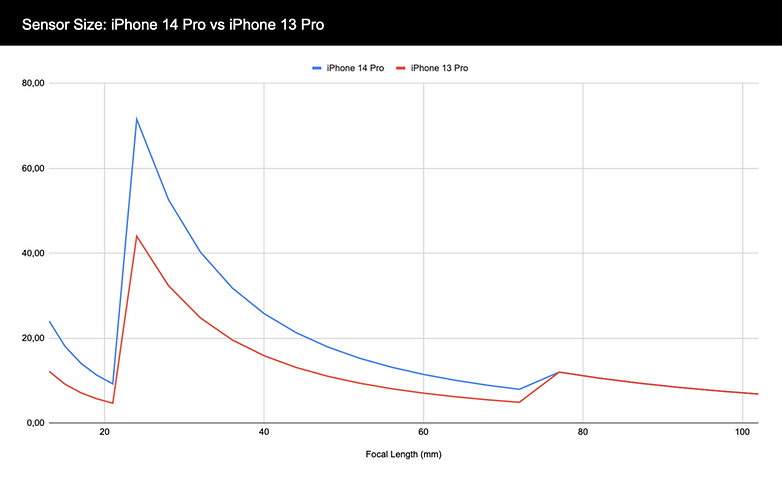

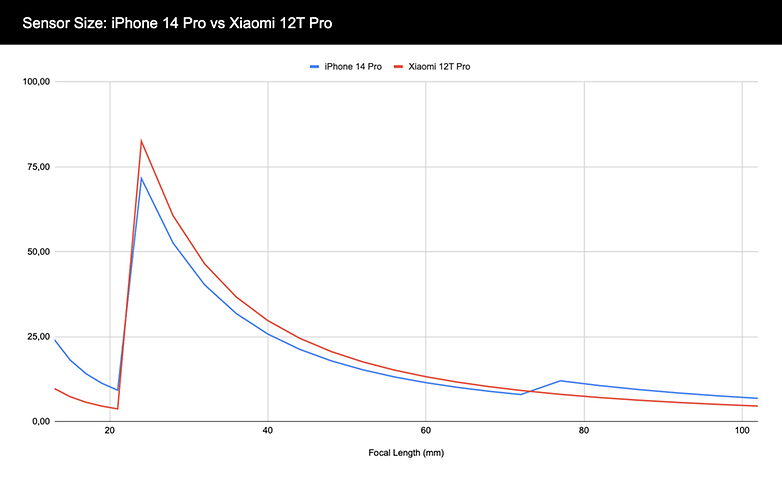

In the following chart, you can see how much sensor area is available to the camera in the iPhone 14 Pro and iPhone 13 Pro at different focal lengths. The ultra-wide angle (13 millimeters) starts at the far left. The main camera (24 millimeters) shows a significant increase. All the way up to the telephoto camera (77 millimeters), the iPhone 14 Pro always has more sensor area available than the iPhone 13 Pro. The telephoto camera itself is identical in both phones.

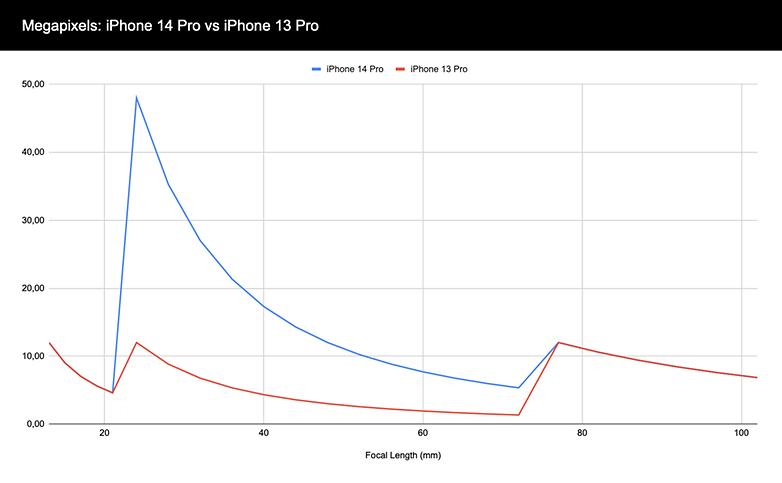

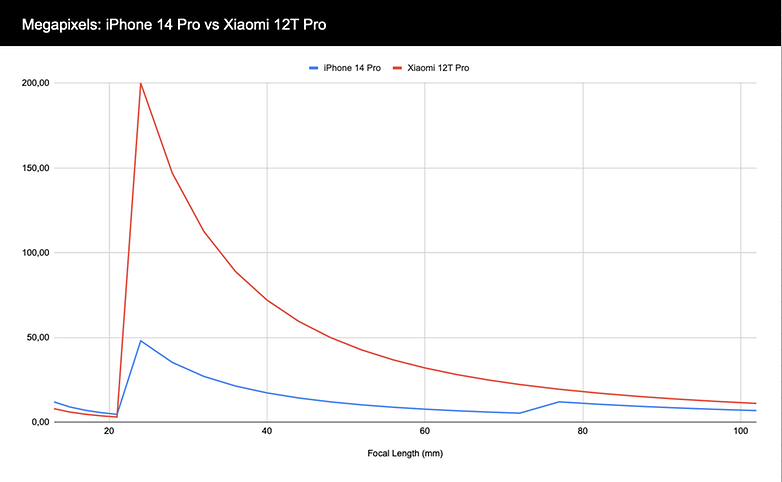

The same chart is also interesting with megapixels (MP) instead of sensor area on the vertical axis. While the progression is identical for the ultra-wide and telephoto sensors, which have 12 MP each, the jump to 48 MP is clearly noticeable. The iPhone 14 Pro has significantly more resolution to play with in digital zoom between 1x and 3.2x.
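
The shape of such a megapixel curve can be approximated with a small sketch. It assumes that the phone always crops the longest camera whose focal length does not exceed the requested one and that no upscaling or multi-frame processing is involved. The focal lengths and resolutions are the ones given in this article; `megapixels_at` is a hypothetical helper.

```python
# (equivalent focal length in mm, native megapixels) as quoted in the article
IPHONE_14_PRO = [(13, 12), (24, 48), (77, 12)]


def megapixels_at(focal_mm, cameras):
    """Megapixels left when cropping the best-suited camera to focal_mm."""
    usable = [(f, mp) for f, mp in cameras if f <= focal_mm]
    if not usable:
        raise ValueError("focal length is wider than the widest camera")
    native_focal, native_mp = max(usable)  # longest camera that still fits
    crop_factor = focal_mm / native_focal
    return native_mp / crop_factor ** 2


for focal in (13, 24, 48, 76, 77):
    print(f"{focal} mm: {megapixels_at(focal, IPHONE_14_PRO):.1f} MP")
```

Just before the switch to the telephoto camera, the cropped main sensor is down to fewer than 5 MP under these assumptions, which is where a higher-resolution main sensor helps the most.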

How big and high-resolution does it have to be?

The iPhone 14 Pro discussed so far is not even the smartphone with the highest resolution or the largest sensor. This week, Xiaomi launched the 12T Pro, which offers a 200 MP sensor - and completely forgoes a telephoto camera in return. But how much more room for digital zooming do so many megapixels offer? Let's take a look at it in comparison with the iPhone 14 Pro:

However, the more important factor besides the resolution is the sensor area that is available to the camera at different focal lengths. At 1/1.22 inches, the Isocell HP1 installed in the Xiaomi 12T Pro is larger than the main camera in the iPhone 14 Pro, but it loses out in terms of available sensor area as soon as the Apple smartphone switches to its telephoto camera:

And what about Quad-Bayer?

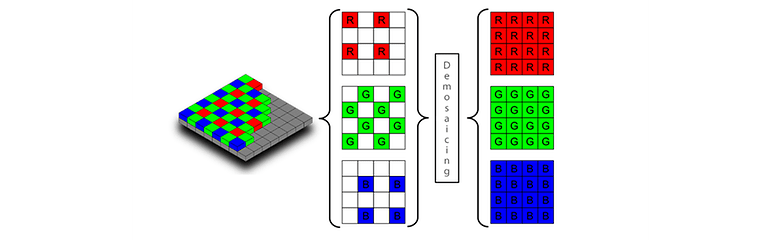

We have ignored one factor so far: the color masks above the sensor. In order to explain Quad-Bayer, we first have to look at how image sensors work. An image sensor consists of lots of small light sensors that only measure the amount of incident light without being able to distinguish colors. 12 MP means having 12 million such light sensors.

To turn this black-and-white sensor into a color sensor, a color mask is placed over the sensor to filter the incident light according to green, red or blue. The Bayer mask used in most image sensors divides every block of two by two pixels into two green pixels, one red pixel, and one blue pixel. A sensor with a resolution of 12 MP therefore has six million green pixels and three million each of blue and red pixels.
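
The 2:1:1 split can be checked with a tiny model of the mask. The sketch below assumes the common RGGB ordering (red at the top left of each 2x2 block); other orderings exist, and the function names are chosen only for this example.

```python
from collections import Counter


def bayer_color(row, col):
    """Filter color over pixel (row, col), assuming an RGGB Bayer layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def color_counts(rows, cols):
    """Count how many pixels sit under each filter color."""
    return Counter(bayer_color(r, c) for r in range(rows) for c in range(cols))


# Print a small patch of the mask and count the colors on a 4 x 6 sensor
for r in range(4):
    print(" ".join(bayer_color(r, c) for c in range(6)))
counts = color_counts(4, 6)
print(dict(counts))
```

Half of the pixels are green and a quarter each are red and blue, which scales up to the six, three, and three million pixels of a 12 MP sensor.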

In demosaicing, or de-bayering, the image processing algorithms use the brightness values of the surrounding pixels of a different color to infer the RGB value of each pixel. A very bright green pixel surrounded by "dark" blue and red pixels thus becomes completely green. And a green pixel next to fully exposed blue and red pixels becomes white. And so on, until we have an image with 12 million RGB pixels.
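
As a rough illustration, here is a deliberately naive demosaicing sketch: each pixel keeps its own measured color and takes the two missing colors as the average of the matching pixels in its 3x3 neighborhood. It assumes an RGGB layout and an image of at least 2x2 pixels. Real camera pipelines use far more sophisticated, edge-aware methods, so this is not what any manufacturer actually ships.

```python
def bayer_color(row, col):
    """Filter color over pixel (row, col), assuming an RGGB Bayer layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def demosaic(raw):
    """Turn a grid of brightness values into a grid of (r, g, b) tuples."""
    rows, cols = len(raw), len(raw[0])
    image = []
    for r in range(rows):
        line = []
        for c in range(cols):
            sums = {"R": 0.0, "G": 0.0, "B": 0.0}
            hits = {"R": 0, "G": 0, "B": 0}
            # Collect all pixels in the 3x3 window, clipped at the image border
            for rr in range(max(0, r - 1), min(rows, r + 2)):
                for cc in range(max(0, c - 1), min(cols, c + 2)):
                    color = bayer_color(rr, cc)
                    sums[color] += raw[rr][cc]
                    hits[color] += 1
            rgb = {k: sums[k] / hits[k] for k in "RGB"}
            rgb[bayer_color(r, c)] = raw[r][c]  # keep the measured value as-is
            line.append((rgb["R"], rgb["G"], rgb["B"]))
        image.append(line)
    return image


# Bright green pixels surrounded by dark red and blue pixels
green_raw = [[255 if bayer_color(r, c) == "G" else 0 for c in range(4)]
             for r in range(4)]
print(demosaic(green_raw)[0][0])
```

In this test patch, every pixel comes out pure green, while a uniformly exposed patch comes out neutral gray or white, matching the two cases described above.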

With higher-resolution sensors, however, the color mask looks different. With the so-called Quad-Bayer sensor, typically in the 50 MP range, there are four brightness pixels under each red, green or blue filter area. The 108 MP sensors even group nine (3x3) pixels under one color area, and the 200 MP sensors have 16 (4x4) pixels. Sony calls this Quad Bayer, while Samsung uses Tetrapixel, Nonapixel, or Tetra2pixel.
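
The grouped masks can be modeled by applying the Bayer pattern to blocks of pixels instead of single pixels. The following sketch again assumes an RGGB ordering, and both function names are hypothetical. It also shows how many "color megapixels" remain for the sensor resolutions mentioned above.

```python
def grouped_bayer_color(row, col, group=2):
    """Filter color over pixel (row, col) when group x group pixels share a filter."""
    block_row, block_col = row // group, col // group
    if block_row % 2 == 0:
        return "R" if block_col % 2 == 0 else "G"
    return "G" if block_col % 2 == 0 else "B"


def color_megapixels(sensor_mp, group):
    """Resolution of the color mask for a sensor with group x group binning."""
    return sensor_mp / group ** 2


# First and third row of a 2x2-grouped (Quad-Bayer style) mask
print("".join(grouped_bayer_color(0, c) for c in range(8)))
print("".join(grouped_bayer_color(2, c) for c in range(8)))

for mp, group in [(48, 2), (108, 3), (200, 4)]:
    print(f"{mp} MP with {group}x{group} groups: "
          f"{color_megapixels(mp, group):.1f} MP color resolution")
```

With `group=1`, the function falls back to the classic Bayer mask, which makes it easy to compare the layouts side by side.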

While the image sensors actually have a resolution of up to 200 MP in terms of brightness, the color mask stops at roughly 12 MP. This is not a problem in itself, since brightness resolution matters more to human perception than color resolution. Nevertheless, at extremely high digital zoom levels, the color resolution eventually decreases to such an extent that image errors occur.

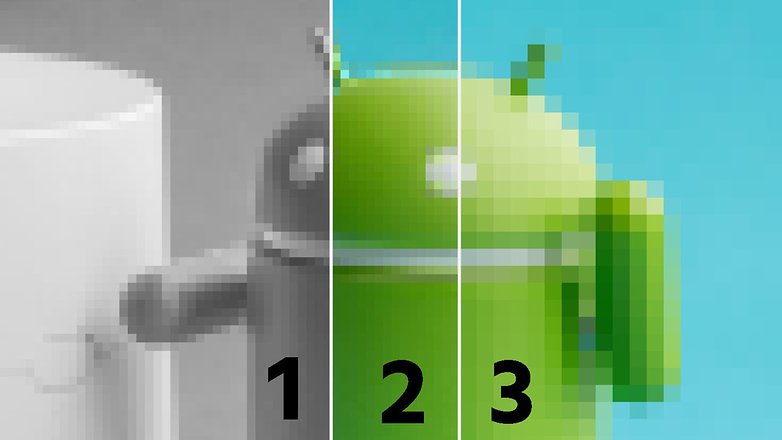

As an example, we have processed a photo of a small android here. On the left (1) you see a grayscale image, on the right (3) an RGB image with quartered resolution. The center image is a composite of both left and right images - and at first glance, the result looks very good. Upon closer inspection, however, the transition between green and blue at the top of the android is unclean.

And it is exactly at such transitions that artifacts occur when you zoom too far into a sensor where the color mask has a lower resolution than the sensor itself. By the way, this is probably the exact reason why Samsung used a 64 MP sensor with a regular RGB matrix, which is atypical for this resolution, for its much-maligned 1.1x telephoto sensor in the S20 Plus and S21 Plus.

The bottom line is that it is hard to tell purely from the hardware specifications how the image quality of a camera will turn out, especially since the manufacturer's algorithms ultimately play a decisive role. The so-called re-mosaicing is also a major challenge for sensors with 2x2, 3x3, or 4x4 Bayer masks. Unlike normal de-mosaicing, the color values have to be interpolated over ever larger sensor areas and with ever greater complexity.

On the other hand, the ever larger sensors also bring problems of their own. In order to keep the lenses compact, the manufacturers have to use lenses that refract the light more and more and thus run into problems with chromatic aberrations and other artifacts, especially at the edge of the image. And the shallow depth of field is also a problem at close range.

So things remain exciting, and I hope you found this journey into the world of extremely large and extremely high-resolution image sensors interesting. What does your dream smartphone camera look like? I look forward to your comments!
