Google I/O 2019: Google Lens gets new superpowers

Google Lens is set to become much more useful with its upcoming update. The Google service uses your smartphone's camera to translate text in real time, recommend the best dishes at a restaurant and even split the bill.

Google Lens is already available on many smartphones, but it becomes much more useful through its direct integration into search results, the camera app and the gallery. With the help of augmented reality features, Google Lens will be able to display suitable search results directly in AR. At Google I/O, the team conjured a virtual great white shark onto the stage.

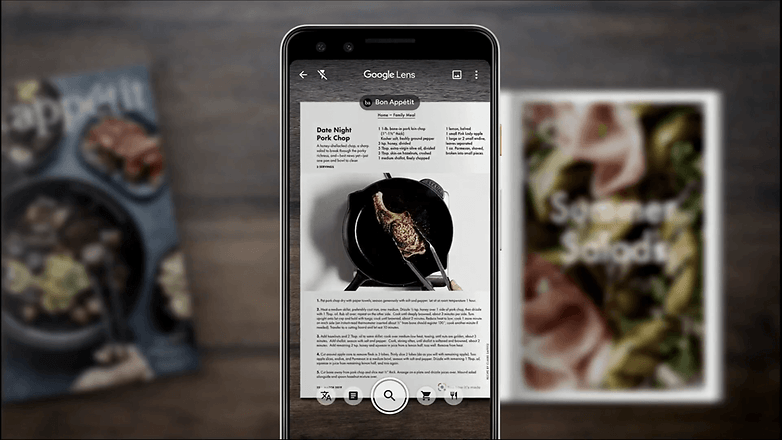

AR has other uses, too. In a museum, for example, the technology can bring exhibits to life when you point the camera at them, or it can show the individual steps of a recipe, provided Google has the right partners on board. Google plans to expand these partnerships over time.

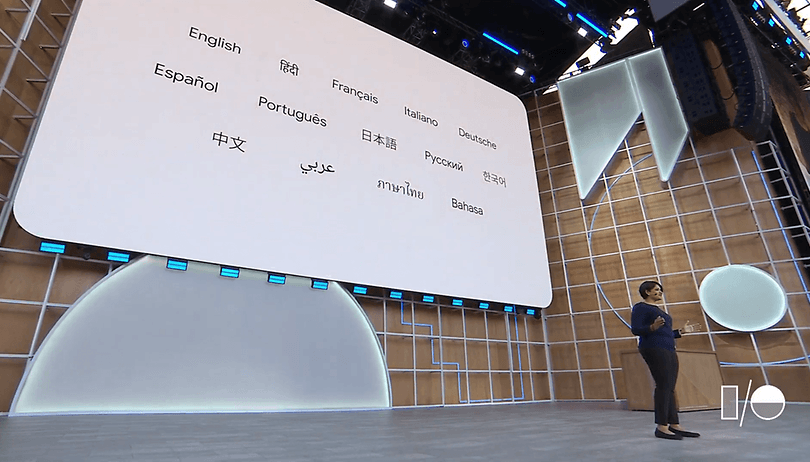

With Google Lens, you can not only translate text in real time but also have it read aloud in any supported language. You can either use photos you have already taken or start the translation directly in the viewfinder in real time. Particularly clever: if you have Google Lens read the translation aloud, the word currently being spoken is highlighted. That way, you can almost pick up a new language on the side.

Google Lens calculates the tip

In the future, Google Lens will also help out in restaurants in several ways. Point the camera at the menu and the app will show you which dishes are particularly popular. If you wish, and provided other Google users have left reviews, you can also view photos of the dishes before you order. When it comes to paying, Google Lens calculates an appropriate tip and splits the bill fairly among your friends.

The Google Lens update that enables the new features is scheduled for release next month. However, not all of the new features are likely to be available in every market at the same time. In addition, some functions are only really useful if a sufficient database of user reviews is available.