Is the 108-megapixel camera in the Xiaomi Mi Note 10 really going to pay off?

It was exactly ten years ago that Canon showed its "Wonder Camera" at the World Exposition - a 100-megapixel concept camera for the age of computational photography. The Japanese company had 2030 in mind as the time frame for a marketable implementation. Only half of that time has passed, and smartphones have already reached the three-digit megapixel mark. But what does that actually mean - and are the 108 megapixels in the Xiaomi Mi Note 10 irrelevant or crazy impressive?

Image sensors have an image problem: megapixels are still seen as the most important indicator of image quality - yet they are becoming more and more irrelevant as pixels keep shrinking. Sensor size is much more important, because the larger an image sensor is, the more light it captures. And more light on the chip means - to put it simply - that the sensor can work at a lower sensitivity, which leads to fewer errors or less noise during processing and better image quality.

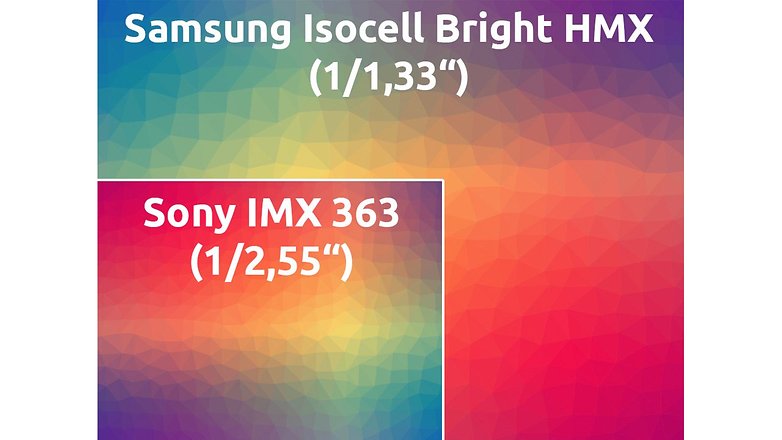

With the Samsung Isocell Bright HMX sensor installed in the Xiaomi Mi Note 10, the sensor size unfortunately takes a back seat to the megapixel count. That's a shame, because at 1/1.3 inch the light-sensitive chip is really damn big. The not very intuitive inch figure corresponds to an area of about 70 square millimeters. That's about three times as large as Sony's IMX 363, which Google uses in the Pixel 4 (XL), for example.
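For a rough sanity check of these figures, the sensor area can be estimated from the pixel count and the pixel size. The sketch below is only an approximation: the pixel grids (12032 x 9024 and 4032 x 3024) and the 1.4-micron pixel size of the IMX 363 are assumptions based on commonly published specs, not figures from this article, and the function name is made up for illustration.

```python
# Rough sensor-area estimate from pixel pitch and pixel count.
# The pixel grids and the IMX 363 pixel pitch are assumptions
# (typical published specs), not values stated in the article.

def sensor_area_mm2(width_px, height_px, pitch_um):
    """Active area in square millimeters for a grid of square pixels."""
    width_mm = width_px * pitch_um / 1000.0
    height_mm = height_px * pitch_um / 1000.0
    return width_mm * height_mm

hmx_area = sensor_area_mm2(12032, 9024, 0.8)    # assumed 108 MP grid
imx363_area = sensor_area_mm2(4032, 3024, 1.4)  # assumed 12 MP grid

print(f"Isocell Bright HMX: {hmx_area:.1f} mm^2")
print(f"Sony IMX 363:       {imx363_area:.1f} mm^2")
print(f"Area ratio:         {hmx_area / imx363_area:.1f}x")
```

Under these assumptions the result lands at just under 70 square millimeters for the HMX and a ratio of a little under three, which matches the ballpark given above.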

So far, so good. In the default setting, the Xiaomi Mi Note 10 shoots all photos at 27 megapixels, so it always combines four of the small 0.8-micron pixels into one 1.6-micron pixel. We already know this method well from the various 48-megapixel smartphones that shoot at 12 megapixels by default. On the Isocell sensor developed jointly by Samsung and Xiaomi, the technique is called Tetracell.
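The idea of combining four pixels into one can be sketched in a few lines. This is a simplified illustration that averages each 2x2 block of made-up brightness values; how the real sensor and Xiaomi's software combine the pixels (summing charge, weighting, and so on) is not described here, and `bin_2x2` is a hypothetical helper.

```python
# Simplified 2x2 pixel binning: every block of four neighboring
# values becomes one output value (here: their average).
# Assumption: plain averaging; real sensors may combine pixels differently.

def bin_2x2(raw):
    """Average each 2x2 block of a 2D list into one output value."""
    rows, cols = len(raw), len(raw[0])
    assert rows % 2 == 0 and cols % 2 == 0, "dimensions must be even"
    binned = []
    for r in range(0, rows, 2):
        out_row = []
        for c in range(0, cols, 2):
            block_sum = (raw[r][c] + raw[r][c + 1]
                         + raw[r + 1][c] + raw[r + 1][c + 1])
            out_row.append(block_sum / 4)
        binned.append(out_row)
    return binned

# Made-up brightness readings from a 4x4 patch of the sensor.
raw = [
    [10, 12, 50, 54],
    [14, 12, 52, 52],
    [30, 30, 80, 84],
    [34, 26, 82, 82],
]
binned = bin_2x2(raw)
print(binned)  # [[12.0, 52.0], [30.0, 82.0]]

# The same factor applies to the headline numbers:
print(108 / 4)   # 27.0 megapixels after binning
print(0.8 * 2)   # 1.6 micron edge length of the combined pixel
```

The resolution drops by a factor of four, while the edge length of each combined pixel doubles.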

Why not use a 27-megapixel sensor from the start?

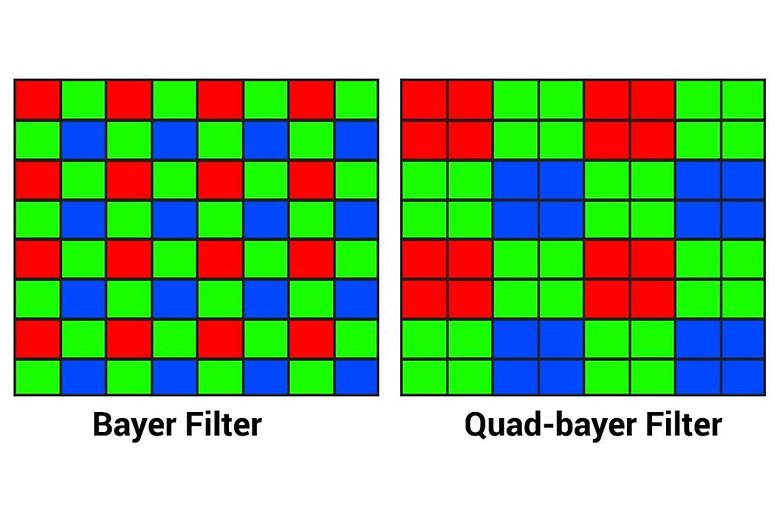

The trick of distilling a lower resolution from a higher one has advantages, at least on paper. Virtually every current smartphone image sensor works with a so-called Bayer mask, which divides the pixels on the sensor into green, red and blue pixels in a ratio of 2:1:1. Without this mask, every pixel on the chip would simply measure brightness - we would have a black-and-white camera. The camera software then calculates the full RGB color values of each pixel from the surrounding pixels of the other colors on the sensor.
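To make the 2:1:1 ratio and the "calculating from neighbors" step more concrete, here is a minimal sketch. It assumes an RGGB tile order and uses plain averaging of the four direct neighbors to estimate green at a red pixel; real camera pipelines use far more sophisticated interpolation, and the function names and sample values are invented for illustration.

```python
from collections import Counter

# Assumption: RGGB tile order (red top-left). Other orders exist.
def bayer_color(row, col):
    """Filter color at a pixel position in an RGGB Bayer layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"

def green_at(raw, row, col):
    """Estimate green at an interior red or blue pixel.

    In a Bayer layout the four direct neighbors of a red or blue
    pixel are all green, so their average is a simple estimate.
    """
    neighbors = [raw[row - 1][col], raw[row + 1][col],
                 raw[row][col - 1], raw[row][col + 1]]
    return sum(neighbors) / 4

size = 4
mask = [[bayer_color(r, c) for c in range(size)] for r in range(size)]
for line in mask:
    print(" ".join(line))

counts = Counter(color for line in mask for color in line)
print(counts["G"], counts["R"], counts["B"])  # 8 4 4, i.e. 2:1:1

# Made-up raw readings; each value was measured through one filter only.
raw = [
    [20, 40, 22, 42],
    [44, 10, 40, 12],
    [24, 48, 26, 44],
    [40, 14, 48, 16],
]
print(bayer_color(2, 2), green_at(raw, 2, 2))  # R 45.0
```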

The color filter mask on the Tetracell sensor developed by Samsung and Xiaomi does not have 108 million filter elements, but only 27 million - four pixels sit under each color filter. The same principle applies, by the way, to Sony's so-called Quad Bayer sensors with 48 megapixels. The advantage, however, is that at least the brightness values can be measured at a finer granularity. And when it comes to perceived image sharpness, brightness resolution plays a greater role than color resolution.
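The layout described here can be drawn as a small grid. In this sketch each color filter covers a 2x2 group of pixels; the RGGB order of the groups is an assumption, and `quad_bayer_color` is a hypothetical helper rather than anything from Samsung's or Sony's documentation.

```python
# Assumption: the color groups follow an RGGB order, with each
# filter covering a 2x2 block of pixels.
TILE = [["R", "G"],
        ["G", "B"]]

def quad_bayer_color(row, col):
    """Filter color at a pixel when every filter spans 2x2 pixels."""
    return TILE[(row // 2) % 2][(col // 2) % 2]

for r in range(4):
    print(" ".join(quad_bayer_color(r, c) for c in range(4)))
# R R G G
# R R G G
# G G B B
# G G B B

pixels = 108_000_000
pixels_per_filter = 4
filter_cells = pixels // pixels_per_filter
print(filter_cells)  # 27000000 color filter cells for 108 million pixels
```

Every pixel still delivers its own brightness reading, but color is only sampled once per group of four.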

The more, the better?

At least on paper, the resolution and especially the sensor size are an advantage over the competition. But it's like football: even with the best squad, you can lose a game if the software isn't right (just look at Bayern Munich). A test will show to what extent Xiaomi's software can put this hardware advantage into practice. Because 108 megapixels also mean a huge amount of data that has to be handled first. In theory, a single 14-bit DNG RAW image can weigh in at a hefty 189 megabytes.
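The 189-megabyte figure follows from simple arithmetic. The sketch below assumes tightly packed, uncompressed sensor data with no metadata and counts one megabyte as one million bytes; actual DNG files may be compressed or padded and so differ in size.

```python
# Theoretical size of uncompressed, tightly packed raw data.
# Assumptions: no compression, no metadata, 1 MB = 1,000,000 bytes.

def raw_size_megabytes(pixels, bits_per_pixel):
    """Size in megabytes of one raw frame."""
    total_bits = pixels * bits_per_pixel
    return total_bits / 8 / 1_000_000

full_res = raw_size_megabytes(108_000_000, 14)
binned = raw_size_megabytes(27_000_000, 14)

print(full_res)  # 189.0
print(binned)    # 47.25
```

For comparison, the same calculation for the 27-megapixel default mode gives roughly a quarter of that.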

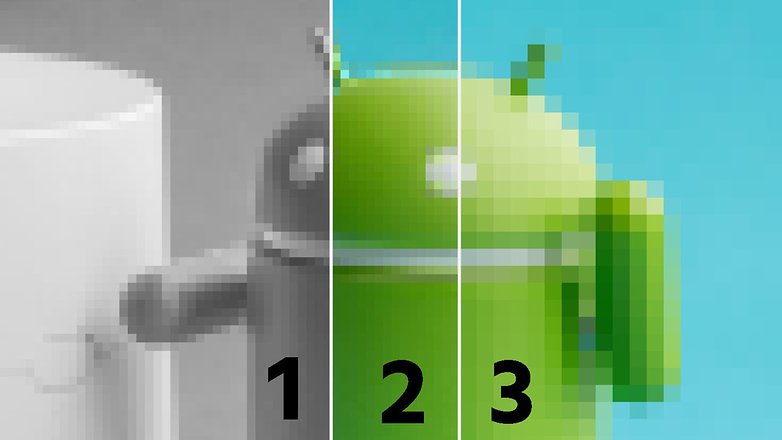

The question here is: is the computing power installed in smartphones already up to such large amounts of data? Or is Google's approach the right one for 2019 - the almost two-year-old IMX 363 in the Pixel 4, with much less surface area and a slim 12 megapixels, which lets current hardware process an HDR image series quickly?

Our in-depth test will show whether Xiaomi ends up getting in its own way with this hardware - and whether the proud 108-megapixel concept predicted by Canon is ahead of its time in 2019, or rather a case for the cabinet of curiosities. Crazy new tech or irrelevant? I'm curious.

After an actually great article explaining how it works, I'm astonished by the equally ridiculous comments about the technology. Technology evolves thanks to bright minds, not by considering it stupid or useless.

Nov 7, 2019

A real camera is what you need to take real photos.

You're right!

A 108 megapixel camera is just stupid and useless; all it does is create a massive file to eat up storage space. If you wanted to blow up a picture to billboard size it might be useful, but even then I doubt it. Just a huge gimmick.