200MP, 3D & Mega Sensors: what to expect from smartphone cameras in 2020

Up to 200 megapixels, in-display cameras, 8K videos, and 3D: the next decade starts with spectacular promise for smartphone photography. We show you the most exciting upcoming trends.

Sensor area and resolution

In the outgoing decade, the 1/3-inch format was long the standard for smartphone cameras. Towards the end of the decade, the chips suddenly grew in size - until Samsung finally pushed the legendary Nokia 808 Pureview off its throne with the Isocell Bright HMX.

Why is size so important?

Imagine the image sensor as a bucket with which you want to measure the amount of precipitation. The bigger the bucket, the more water it collects. Of course, we could also collect the precipitation in a shot glass and then extrapolate to liters per square meter. But the larger the vessel, the more accurate the result.

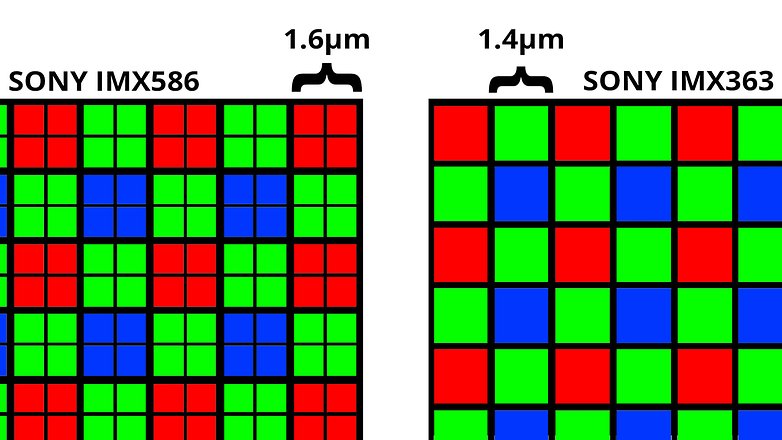

The Samsung Isocell Bright HMX with its 1/1.33-inch format now offers about three to four times as much area as the Sony IMX363 in the Google Pixel 4 XL. However, the resolution of 108 megapixels is almost ten times as high, so the individual pixels on the sensor are significantly smaller.
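
The comparison can be checked with a little arithmetic. The following is only a rough sketch: the pixel counts and pixel pitches are my assumptions taken from public spec sheets, not figures from this article, and the calculation treats the pixels as gapless squares.

```python
# Assumed spec-sheet values (not from the article): resolution and pixel pitch.
SENSORS = {
    "Isocell Bright HMX": {"width_px": 12032, "height_px": 9024, "pitch_um": 0.8},
    "Sony IMX363": {"width_px": 4032, "height_px": 3024, "pitch_um": 1.4},
}


def sensor_area_mm2(width_px, height_px, pitch_um):
    """Active area in square millimeters, assuming gapless square pixels."""
    width_mm = width_px * pitch_um / 1000
    height_mm = height_px * pitch_um / 1000
    return width_mm * height_mm


def megapixels(width_px, height_px, **_ignored):
    """Pixel count in millions; extra keys such as pitch_um are ignored."""
    return width_px * height_px / 1e6


hmx = SENSORS["Isocell Bright HMX"]
imx = SENSORS["Sony IMX363"]

area_ratio = sensor_area_mm2(**hmx) / sensor_area_mm2(**imx)
resolution_ratio = megapixels(**hmx) / megapixels(**imx)
pixel_area_ratio = (imx["pitch_um"] / hmx["pitch_um"]) ** 2

print(f"Area ratio:       {area_ratio:.1f}x")
print(f"Resolution ratio: {resolution_ratio:.1f}x")
print(f"Each IMX363 pixel covers {pixel_area_ratio:.1f}x the area of an HMX pixel")
```

Under these assumptions the area ratio lands at the lower end of "three to four times", while the resolution grows far faster than the area does. That gap is exactly why the individual pixels shrink.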

For their high-resolution sensors beyond 30 megapixels, both Samsung and Sony rely on a special Bayer mask, called Tetracell (Samsung) or Quad-Bayer (Sony), whose color filters have only a quarter of the sensor's pixel resolution. The color resolution of the 108-megapixel sensor, for example, is 27 megapixels - at this resolution, the effective pixel size is larger than that of the common 12-megapixel sensors.
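
To make the layout more tangible, here is a minimal sketch of how a Quad-Bayer mask differs from a classic Bayer mask. The filter order (G/R over B/G) and the 0.8 and 1.4 micrometer pixel pitches are assumptions for illustration; real sensors may arrange the colors differently.

```python
# One common Bayer arrangement; the actual order varies by sensor (assumption).
BAYER_TILE = [["G", "R"],
              ["B", "G"]]


def bayer_color(row, col):
    """Color filter above a pixel in a classic Bayer mask."""
    return BAYER_TILE[row % 2][col % 2]


def quad_bayer_color(row, col):
    """In a Quad-Bayer mask, each color filter spans a 2x2 group of pixels."""
    return bayer_color(row // 2, col // 2)


def pattern(color_fn, size):
    """Render the top-left size x size corner of a mask as rows of letters."""
    return ["".join(color_fn(r, c) for c in range(size)) for r in range(size)]


quad_tile = pattern(quad_bayer_color, 4)
for line in quad_tile:
    print(line)

full_resolution_mp = 108
color_resolution_mp = full_resolution_mp / 4   # one filter per four pixels
binned_pitch_um = 0.8 * 2                      # assumed 0.8 um pixels, 2x2 groups

print(color_resolution_mp, "MP color resolution")
print(binned_pitch_um, "um effective pitch vs. an assumed 1.4 um on 12 MP sensors")
```

The printed tile shows four identical letters per block: the sensor sees 108 million brightness values, but only 27 million distinct color filter positions.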

Lots of resolution, lots of flexibility

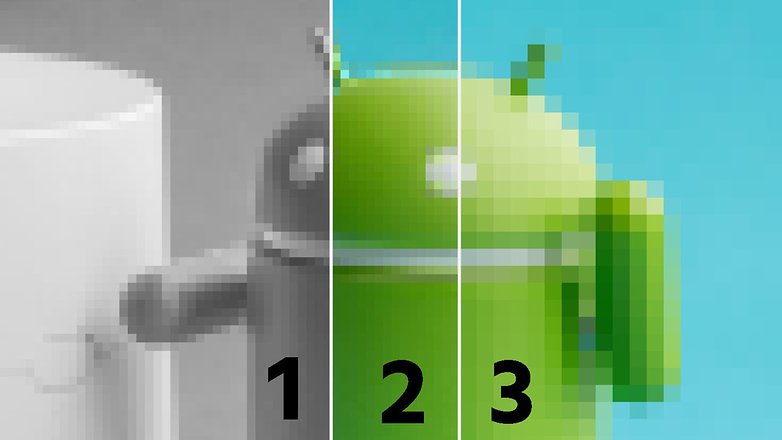

Why this trick? Subdividing the color pixels in this way increases flexibility. Under ideal lighting conditions, the full resolution allows extremely fine details to be reproduced. The lower color resolution matters much less than the very high brightness resolution; the chroma subsampling common in video relies on the same principle.

The sensors then show their real advantages in difficult lighting conditions. With high-contrast subjects such as backlit scenes, both Sony and Samsung sensors allow the individual pixels under a single color filter to be exposed differently. This allows HDR photos to be captured in a single shot - or HDR videos, for example.
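
How such a single-shot HDR merge could work in principle is sketched below. This is not the manufacturers' actual algorithm, which is not described here; the function name, the 10-bit saturation level, and the simple "use the long exposure unless it clips" rule are all assumptions for illustration.

```python
SATURATION = 1023  # assumed 10-bit raw values; real sensors may differ


def merge_hdr_group(long_values, short_values, exposure_ratio):
    """Merge the subpixels under one color filter into one linear value.

    long_values:    raw readings of the long-exposed subpixels
    short_values:   raw readings of the short-exposed subpixels
    exposure_ratio: long exposure time divided by short exposure time
    """
    # Prefer the long exposure: it has collected more light and is less noisy.
    unclipped = [v for v in long_values if v < SATURATION]
    if unclipped:
        return sum(unclipped) / len(unclipped)
    # Every long-exposed subpixel is blown out, so fall back to the short
    # exposure and scale it up to the same brightness scale.
    return exposure_ratio * sum(short_values) / len(short_values)


shadow = merge_hdr_group([400, 420], [50, 52], exposure_ratio=8)
highlight = merge_hdr_group([1023, 1023], [300, 310], exposure_ratio=8)
print(shadow, highlight)
```

The highlight value ends up above the raw saturation level, which is the point: the merged image covers a wider brightness range than any single subpixel could record.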

Finally, in the dark, the large sensors play to their area advantage, since more light can simply be collected under each color filter by combining the information from four subpixels at a time.
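
The bucket comparison from earlier can be put into numbers. As a simplified sketch, I assume that photon shot noise is the only noise source (read noise and other effects are ignored), in which case the signal-to-noise ratio is the square root of the number of photons collected. The photon count used here is a made-up example value.

```python
import math


def shot_noise_snr(photons):
    """Signal-to-noise ratio when only photon shot noise is considered."""
    return math.sqrt(photons)


photons_per_subpixel = 100  # hypothetical low-light example value

single_snr = shot_noise_snr(photons_per_subpixel)
binned_snr = shot_noise_snr(4 * photons_per_subpixel)  # four subpixels combined
snr_gain = binned_snr / single_snr

print(f"Single subpixel SNR: {single_snr:.0f}")
print(f"Four combined:       {binned_snr:.0f}")
print(f"Gain:                {snr_gain:.1f}x")
```

Four times the light does not mean four times less noise: under this model, combining four subpixels doubles the signal-to-noise ratio.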

By the way: JPEG photos and MPEG videos typically use 4:2:0 chroma subsampling. Put simply, this means that only one color sample is stored for every four brightness samples anyway - as in the example image above.
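
For the curious, 4:2:0 subsampling can be sketched in a few lines. Averaging each 2x2 block is just one simple way to do it (real encoders may filter differently), and the sample values below are invented for illustration. The sketch assumes planes with even width and height.

```python
def subsample_420(chroma_plane):
    """Reduce a chroma plane to one averaged sample per 2x2 block."""
    height, width = len(chroma_plane), len(chroma_plane[0])
    return [
        [
            (chroma_plane[r][c] + chroma_plane[r][c + 1]
             + chroma_plane[r + 1][c] + chroma_plane[r + 1][c + 1]) / 4
            for c in range(0, width, 2)
        ]
        for r in range(0, height, 2)
    ]


# A made-up 4x4 chroma plane (for example the Cb channel of a tiny image).
cb_plane = [
    [100, 102, 50, 52],
    [104, 106, 54, 56],
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]

cb_subsampled = subsample_420(cb_plane)
print(cb_subsampled)

# Sample count for a 4x4 image: brightness stays at full resolution,
# while both chroma planes shrink to a quarter of their size.
luma_samples = 4 * 4
samples_444 = luma_samples * 3
samples_420 = luma_samples + 2 * len(cb_subsampled) * len(cb_subsampled[0])
print(samples_420, "of", samples_444, "samples remain")
```

Half of the data is gone before any actual compression happens, and most viewers never notice.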

In 2020 we will see many smartphones with extremely high-resolution sensors. It is no coincidence that Qualcomm's newly introduced Snapdragon 865 supports cameras with up to 200 megapixels. And if we can believe the Americans, we will see such resolutions in smartphones as early as next year.

Even more than the 8K resolution promised by Qualcomm, I'm happy about the slow-motion improvements. The Snapdragon 865 allows recording at 960 fps without the annoying limit of a tiny fraction of a second.

Camera modules: a lot helps a lot!

In addition to larger main sensors with more and more megapixels, the latest smartphones have also been packing in more and more camera modules. A penta camera like the one in the recently tested Xiaomi Mi Note 10 will be the rule rather than the exception in 2020.

I hope, however, that camera modules will not simply be crammed into phones without rhyme or reason in the coming year, just to have more lenses than the competition. Macro cameras or depth sensors with 2 megapixels are superfluous in my opinion when a high-resolution main sensor and an additional ultra-wide-angle camera are available at the same time.

Breakthrough for ToF

There is another new type of sensor that will be in the spotlight next year: time-of-flight cameras take only a comparatively low-resolution image, but provide depth information for each pixel and thus generate a true 3D image.
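
The principle behind the name can be sketched quickly: the sensor emits light and measures how long it takes to return, and the distance follows from the speed of light. Many smartphone modules do not time a pulse directly but measure the phase shift of modulated light instead, so both variants are shown. The example timing, phase, and the 20 MHz modulation frequency are assumptions for illustration.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458


def distance_from_round_trip(round_trip_s):
    """Direct time of flight: the light travels to the object and back."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2


def distance_from_phase(phase_rad, modulation_hz):
    """Indirect time of flight: distance from the phase shift of modulated light."""
    return SPEED_OF_LIGHT_M_PER_S * phase_rad / (4 * math.pi * modulation_hz)


direct_m = distance_from_round_trip(10e-9)           # 10 nanoseconds round trip
indirect_m = distance_from_phase(math.pi / 2, 20e6)  # quarter-cycle shift at 20 MHz

print(f"Direct:   {direct_m:.2f} m")
print(f"Indirect: {indirect_m:.2f} m")
```

A round trip of just 10 nanoseconds already corresponds to about 1.5 meters, which gives an idea of how precise the timing has to be.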

With this depth information, it is now possible, for example, to generate more precise bokeh effects, cut out objects or integrate any virtual objects into photos. Infineon and Sony are working hard to produce time-of-flight sensors for smartphones - and according to the latest rumors, the next generation of iPhones will use exactly such modules to support the main camera in AR applications.
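
How a depth map might drive a bokeh effect is sketched below: the further a pixel is from the focus distance, the more it gets blurred. This is a deliberately naive illustration, not how any particular phone does it; the function name, the `strength` and `max_radius` parameters, and the depth values are all hypothetical.

```python
def blur_radius_map(depth_m, focus_m, strength=4.0, max_radius=8):
    """Blur radius in pixels for each depth sample.

    Pixels at the focus distance stay sharp (radius 0); the radius grows
    with the distance from the focal plane and is capped at max_radius.
    """
    return [
        [min(max_radius, round(strength * abs(depth - focus_m))) for depth in row]
        for row in depth_m
    ]


# Made-up depth map in meters: a subject at about 1 m, background at 2 to 3.5 m.
depth_map = [
    [1.0, 1.1, 3.0],
    [1.0, 2.0, 3.5],
]

radii = blur_radius_map(depth_map, focus_m=1.0)
print(radii)
```

The same per-pixel depth could just as well be thresholded to cut out the subject instead of blurring the background.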

Goodbye to the notch

From 2020 we can probably say "Adios" to a big trend of past years: the notch. At the beginning of 2019, Oppo introduced a camera that can take pictures through the display. At the beginning of December, the Chinese manufacturer presented a functioning prototype at an event in Shenzhen. In 2020 or 2021 at the latest, we can probably hope for the first smartphones with the corresponding camera.

Computational photography

We've touched on the subject several times in this article, but computational photography definitely deserves its own section for 2020. While computer-assisted photography has primarily appeared in recent years in the form of HDR, multi-shot night modes and, most recently, astrophotography on the Pixel 4, the spectrum will be broader next year.

The beta of Adobe's camera app, Photoshop Camera, offers an interesting preview: in addition to the usual color filters, there are also filters that change the weather or turn day into night. And in the coming year we will also see more and more advanced effects that make eyes more radiant, skin more beautiful or even make people look younger à la FaceApp.

Are you excited about the future?

Which improvements are you looking forward to the most, and what do you possibly fear? And which topic that matters to you personally has been totally neglected? I look forward to your opinions in the comments!

-

Admin

Jan 6, 2020

I am still convinced that a real camera is much better and that without these expensive pseudo cameras the phones would be much cheaper.

Gimmick after gimmick, and the self-proclaimed experts who sell their souls for free phones. They all tell you how great and innovative it is. Total BS!!!