Poll of the Week: Are benchmarks important to you?

When it comes to reviewing mobile devices, nothing can replace the personal account and experience of the reviewer. That was the conclusion of last week's Poll of the Week on NextPit. Check out the results below.

On Friday, we asked our community about the relevance of benchmark tests in smartphone reviews, as well as which benchmark software they prefer to see in such reviews. On the whole, the results are not very surprising. As usual, the survey was shared across all four NextPit domains: Brazil, Germany, France, and this one (International).

Benchmarks are not that relevant to our community

Looking not only at the results of the quick polls but also at readers' comments in each domain, the weight given to performance analysis software is on the decline. The reason lies not in the credibility of the benchmark apps themselves, but in the optimizations manufacturers have made to their smartphones' operating systems in order to score better in such tests.

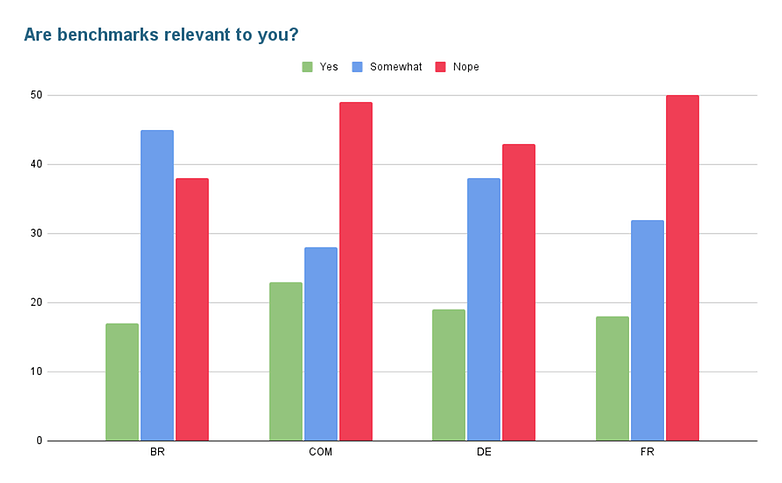

In NextPit's Brazilian community, 45% of people said they have some interest in benchmark apps as a reference in smartphone reviews, while in the other domains the most-voted option was that such benchmarks are not really relevant: Germany (43%), France (50%) and International (49%).

I wish companies wouldn't cheat on benchmarks in order to make them meaningful again.

Storm, international community

Beyond the controversies involving companies that cheat in benchmarks, some people reported that they don't understand the raw results presented in reviews. For them, such benchmarks make no sense at all and ultimately do not influence their final opinion of the smartphone.

A lot of times [benchmark results] are a series of numbers that I don't always understand, and I don't even try to understand, and I think that's the case for a lot of consumers.

Pierre Aubry, French community

AnTuTu still appears to be the most popular benchmark

| NextPit Community | Ranking |
|---|---|
| Brazil | |
| Germany | |
| France | |
| International | |

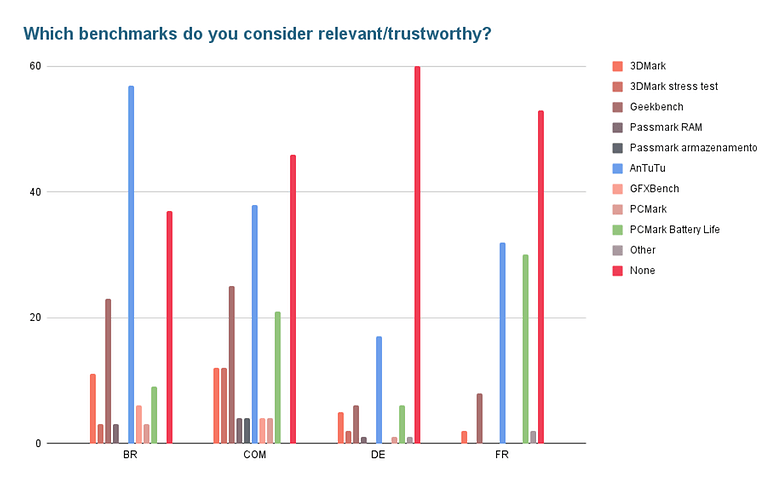

When it comes to which benchmark software is considered the best, or perhaps the most popular, there was very little variation between the different communities. In general, those who enjoy checking out benchmarks prefer the results presented by AnTuTu.

I use AnTuTu to look beyond the ranking and study which smartphone to buy. Honestly, there are many people who are being cheated because they don't know certain things about smartphones. Good or bad, the app's ranking continues to be useful, especially for those who want to make a good and smart decision.

Douglas, Brazilian community

However, following the trend seen in the first poll, the most-voted option was that benchmarking software is not relevant, regardless of which app it is: Germany (60%), France (53%) and International (46%). This option came in second place in Brazil (37%).

Conclusion

As mentioned above, nothing replaces the personal account and experience of those who review a phone! However, as seen in a number of comments in our German community, despite the controversies or the use of this type of tool for marketing campaigns, benchmarks still have a relevant purpose when it comes to making hardware comparisons.

Apple's A15 Bionic, Google's Tensor, Samsung's Exynos, Qualcomm's Snapdragon, MediaTek's Dimensity and others deliver different levels of performance, and there will always be curiosity about which processor (SoC) is the most powerful. That part of the equation cannot be avoided. However, the performance difference in day-to-day actions ends up being very small for processors in the same class, so it doesn't really affect the overall experience from one set of hardware to another. And this has clearly diminished public interest in benchmark results.

I don't care about benchmarks because they don't say much about actual performance in practice.

KuestenGlueck13, German community

Personally, I pay much more attention to the description of the user experience with a product than to the benchmark results offered in a review. That's because it's much easier for me to relate to a device's usage time measured in hours or days than to scores presented in a table.

As always, thank you very much for participating in our poll of the week, and a special thanks to the people who shared their opinions with our community! What did you think of the results? I'm curious to know your thoughts in the comments section below.

Original article:

In this week's poll, we bring to the NextPit community yet another intense internal debate from the editorial team. How relevant are benchmarks to the audiences who follow our site? Do the tools used to measure differences between devices in real-world use actually matter, or do they just give a relative idea of the different smartphones sold on the open market?

Controversies involving benchmarks abound, ranging from component manufacturers who cheat on tests to models that switch off the internal controls that regulate power consumption and temperature when they detect benchmark apps. All of this helps us understand why some smartphones simply overheat and crash during benchmarks.

The practice isn't new: one of the most symbolic cases, the infamous "quack3.exe", recently turned 20 years old. Still, benchmarking tools are a constant presence in reviews and comparisons of processors, graphics cards, and, of course, smartphones and tablets.

All of which brings us to the first question:

Part of the popularity of benchmarks, including among everyday consumers, is due to how easy they are to install and run, with no need to define scripts to launch apps and time their use. It also helps greatly that the scores racked up are easy to compare across various publications and even in rankings built from tests performed by the public.

Which benchmarks really matter

But for those who care about the numbers revealed by apps, which are the ones that really matter? With so many options available on the market, do you value any particular benchmark more when choosing a new smartphone or tablet?

Of course, benchmarks make up only a small part of the reviews here at NextPit, but we do wonder if we should reduce (or increase) the amount of time spent performing tests and analyzing the scores. This is an even greater concern when considering how many of the devices on the market offer plenty of bang for your buck. After all, numerous devices basically use the same components under the hood, with slight variations that fall comfortably within the margin of error in performance benchmarks.

Feel free to weigh in, critique, and elaborate on your responses to this week's poll. Would you like to see an article with a general explanation of how each test we use reflects actual device usage? Let us know in the comments.

Original survey was written by Rubens Eishima.

For my personal use, they aren't particularly relevant because my game preferences aren't CPU/graphics intensive. I do like what the benchmarks are trying to measure and find it a useful synthetic comparison. The benchmarks that run a set of intensive real tasks is more meaningful than the full synthetics imho.

I wish companies wouldn't game them so that the benchmarks would then be meaningful again.