Facebook AI scientist: deep learning needs a new programming language

In recent years, the artificial intelligence field has seen massive advancements. However, according to Facebook's Chief AI Scientist, Yann LeCun, for that growth to continue, the industry might need to focus on producing chips dedicated to deep learning, as well as a new, more efficient programming language.

Artificial intelligence is much older than you might expect - it has been around for more than fifty years. LeCun has been part of that history. He worked at Bell Labs in the 1980s and developed the convolutional neural network (ConvNet, or CNN), which he applied to reading handwritten ZIP codes. In his view, hardware innovation is inseparably tied to advancements in the deep learning field, and we need to see even more progress in the future.

This is why, during his keynote address at the 2019 International Solid-State Circuits Conference, LeCun addressed the need for more DL-specific hardware. He thinks that demand for it will only increase in the future, thanks to "new architectural concepts such as dynamic networks, associative-memory structures, and sparse activations".

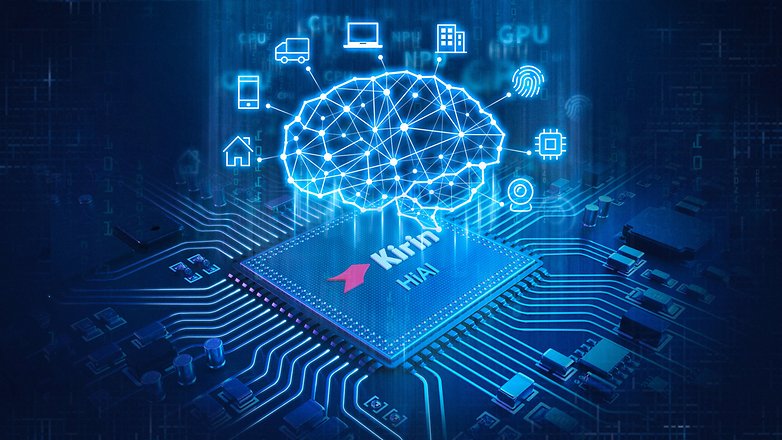

Following a report from the Financial Times, there are also rumors that Facebook might be working on an AI chip of its own. "Facebook has been known to build its hardware when required - build its own ASIC, for instance. If there's any stone unturned, we're going to work on it," LeCun said. However, Facebook has not officially confirmed this.

Finally, LeCun stressed that current programming languages might also be holding AI back: “There are several projects at Google, Facebook, and other places to kind of design such a compiled language that can be efficient for deep learning, but it’s not clear at all that the community will follow, because people just want to use Python,” LeCun told VentureBeat.

Do you agree with Facebook's chief AI scientist? What do you think the future of AI holds? Let us know in the comments.

Source: VentureBeat, Facebook

The state of AI can be likened to this: A school teacher instructs students to take dictation. She says "I am going to read a book and everyone has to write down exactly what I say and nothing else. Furthermore, this is all we will ever do...

AI has needed a new programming language for decades. What is needed is the capability for the AI to read human-written code and augment existing code within that language's syntax. All good human coders augment useful code, and do it on a regular basis, so why not start there?

Today a multitude of programming languages exists, each serving a specific purpose in various fields. These languages fall into four paradigms: object-oriented, like C++ and Java; imperative, like FORTRAN and Pascal, which are action oriented; functional, like LISP and ML; and rule-based, like Prolog, which is used in AI.

This development, which has taken place over the last seven decades, has been a top-down movement toward the specialization of programming languages. Languages have gained more powerful syntax, better programming environment support, and more features, such as flexibility of approach and header files. They are translated into absolute machine code by powerful compilers (followed by machine-specific assemblers), which may be self-hosted, native, or cross compilers.

Whenever programmers felt the need for a more sophisticated and less obtrusive way to write human-understandable instruction sets for machines, a new programming language emerged.