Two weeks with the Xiaomi Mi Note 10, and camera test problems


First place with 121 points! But it's not only at DxOMark that the Xiaomi Mi Note 10 Pro and its sibling, the Mi Note 10, got great feedback for their cameras. After two weeks traveling through Indonesia with the Mi Note 10, during which I took more than 2,000 photos and videos, I have to say, unfortunately, that I can't agree at all with the euphoric test reports. At the same time, the trip exposed problems that all camera tests struggle with, including ours, of course.

If you're wondering, the Mi Note 10 Pro and the Mi Note 10 have almost the same camera module. The Pro version that DxOMark tested has an 8P lens for its main camera, while the non-Pro version only has a 7P lens. Once we get our hands on the Pro version, we'll do a direct comparison. Personally, I don't expect much of a difference in terms of image quality.

To be clear: I took some very nice photos with the Xiaomi Mi Note 10. With some shots, I didn't miss my DSLR, 11,000 kilometers away, in the slightest. But unfortunately, I couldn't count on a good (or at least usable) image ending up in storage when I pressed the shutter button. Having read the first euphoric test reports with enthusiasm, I took only our review sample of the Mi Note 10 on my trip instead of my DSLR, and I was not prepared in the slightest for three big problems.

Problem 1: Inconsistency

Whether it's white balance, autofocus, or exposure, the Xiaomi Mi Note 10 does not deliver reliable results. Perhaps only a few percent of the images are affected, but those that are show visible errors or are outright unusable.

Some photos, with their banding effects, remind me of my beloved Nokia 7650, while in others the autofocus is clearly off, even in daylight and even in portrait mode, where it should be obvious what needs to be sharp.

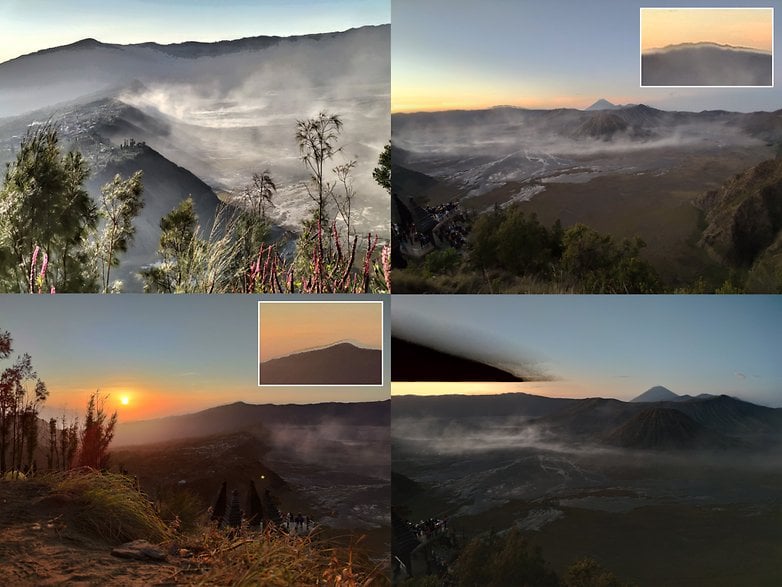

The biggest problems came with the HDR mode, which in some cases produced extreme ghosting. With moving subjects, the challenge, and the resulting problems, may be understandable. But a high-contrast horizon at sunrise should not cause errors as drastic as those in the pictures below.

Problem 2: Performance

The camera app is way too slow. The built-in SoC, a Snapdragon 730G, struggles quite a bit with the 108-megapixel images. For example, it can take up to ten seconds for an image to be saved at full resolution.

But even when switching between camera modes, the Mi Note 10 takes far too many seconds to do its job. Over the past two weeks, I've all too often looked into baffled faces because it took me so long to take a picture with my smartphone.

The camera app also froze several times during the testing period and refused to cooperate until I restarted the device. Such problems were already raised at the product launch, and Xiaomi promised an early software update to improve stability. I hope the manufacturer will get the image processing problems under control with this still-pending update.

Problem 3: Camera obstruction

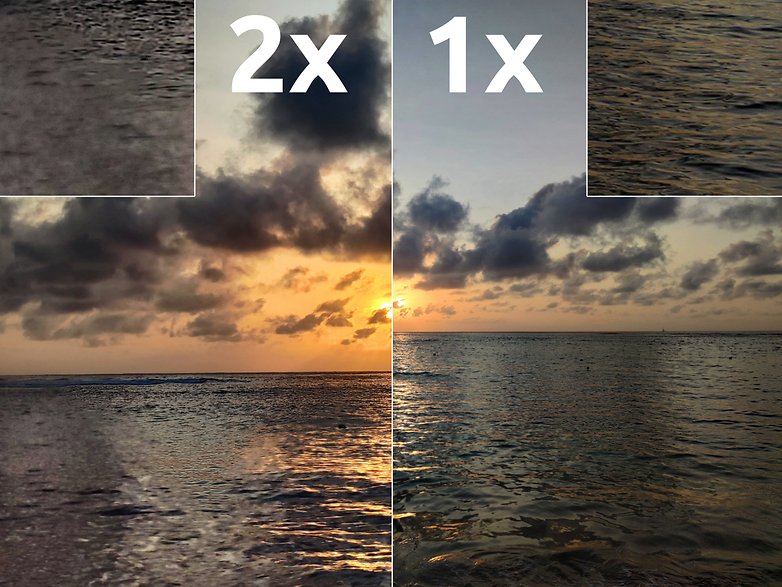

Last but not least, I find the camera setup itself really problematic. I'd like to take this opportunity to bring up a counterexample: the iPhone 11 Pro has three cameras with 12 megapixels each, so every photo it takes has a resolution of 12 megapixels. The difference between 0.9x and 1.0x zoom, for example, is relatively small, at least as long as you don't view the images enlarged.

The Xiaomi Mi Note 10, by contrast, has five cameras: one with 108 megapixels, one with 20, one with 12, one with 2, and one with 5 (or 8?) megapixels. The individual modules also differ greatly in sensor size, and in image quality. Anyone who doesn't keep the hardware configuration in mind will be surprised by how much the image quality varies depending on the camera settings.

Are we testing smartphone cameras wrong?

The very positive DxOMark test mentioned at the beginning of this article lists the device as the Xiaomi Mi CC9 Pro Premium Edition. After reading it and other tests, I would never have expected such blatant problems. However, I also doubt that any other tester has had the opportunity to try the Mi Note 10 in such detail and in so many different scenarios. That's totally understandable; after all, no magazine is going to finance a two-week camera test in Southeast Asia for a single smartphone. I happened to be on vacation, so it was a lucky coincidence.

But do camera tests really make sense if they just snap through a few standard scenarios? If you photograph a few lab charts and then have software measure the signal-to-noise ratio or count line pairs? Or if an editor carries the smartphone through their everyday life for a few days and shoots a few dozen photos? That's how we test cameras today, isn't it?

Now it's your turn: how should a camera test be structured so that it informs you in the best possible way about the photo qualities of a smartphone?

You'll find our very detailed test of the Xiaomi Mi Note 10 camera on AndroidPIT in the next few days, and don't worry: the problem photos shown here are the exception rather than the rule. Until then, I'm really looking forward to your input on how we can improve our camera tests in the future.

My opinion about this phone... I don't know what to say because this phone is weird.

Positive points:

- 108 MP shots in very strong natural light are very good, indeed.

- The dark mode is excellent.

- The screen is quite big.

- The battery life is very good.

Negative points:

- HDR is horrible: colours and exposure are very bad.

- The camera, whatever mode is selected, is usable ONLY in very, very strong natural sunlight. Otherwise, it is absolutely the worst I have ever seen in my whole life. The only comparable phone I have ever held in my hands was a very cheap one (about 40 €).

- The pictures are grainy.

- The pictures are blurred.

- The pictures are correctly exposed only in the middle; the borders lack light and are grainy, and nothing can be done about it in post-processing.

- The screen colours are really not the best. Many LCDs are better, more accurate, and more neutral.

- The processor is seriously nothing extraordinary. Some older processors are way better optimized.

I have the very latest update. This phone should cost 180-200 €, not more!

My Mi Max 2 is millions of years better: screen, camera, battery, sensors, system stability.

If the very latest update for my Mi Max 2 (a "block OTA") hadn't killed the phone, I wouldn't have bought another one.

I've had the phone for a month and maybe the software has been improved, but I really like it. Some of the images from the main camera are simply the best I've seen from a phone.

There are some processing issues that show up from time to time, including occasional loss of detail in foliage or water and some highlight clipping in high-contrast scenes. I've even had a couple of images with a green tint, like the ones in this article. I hope that software updates fix those.

But the good definitely outweighs the bad and when the pictures are good, they are really, really good.

BTW, why shoot at 108 MP? 108 MP is mostly there for the curiosity factor; the purpose of the large sensor is better realized in the standard 27 MP main-camera images, which are often great.

In your opinion what is the best camera smartphone out there?

Wow, I just bought it a few days ago, and I absolutely agree with you. DxOMark just overhyped this phone. My previous phone, the Mi 9, performed much better than the Mi Note 10. Hats off to you for putting my thoughts on this phone into words; the DxOMark review is a big shame. I hope they review it again soon and put it somewhere around the 110-point range.


Admin

Nov 29, 2019

Phones are for communication, cameras are for taking photos.

These are both camera phones: their cameras are the first reason they sell. You can communicate very well with photos and videos. Don't forget: a picture is worth a thousand words...