The Sony paradox: how camera software could change everything

Ever wondered why Sony builds great image sensors, but its in-house smartphones always come with mediocre cameras? One Sony boss has been asking himself the same question! And he wants to do something about it. Is Sony on the right track?

Kenichiro Yoshida has been CEO of Sony since April 2018 (he was previously CFO), and this month he spoke about Sony's strategy in an interview with the German magazine Wirtschaftswoche (Business Week). He wants to set the company on a new course and, in doing so, link the many corporate divisions more closely with each other.

Among other things, Wirtschaftswoche brought up the big dilemma Sony has been dealing with for a while: Sony produces outstanding image sensors that are used in the smartphones of all the major manufacturers, but its own devices aren't really known for producing particularly good pictures. At least that's how you have to interpret the somewhat misleading question: Wirtschaftswoche asked why the best sensors aren't being used at Sony. But that's not correct - see the Xperia XZ2 Premium, for example. It’s a curious situation, and Yoshida is also surprised by it.

Who, if not the Sony boss himself, can do anything about it? It has now been decided that the head of the imaging division will also oversee the development of smartphones. "Of course, we also have to bring our photo competence into our own products," the Sony boss told Wirtschaftswoche. He continued: "And I think we'll see results soon."

That could work, but Sony has a hard road ahead of it, for three main reasons.

1.) The hardware is pretty good already

If we look at the latest Xperia flagships, Sony has already set things in motion. Take the trend towards super slow motion video, for example, which was only possible with a special Sony sensor. So Sony is on the right track - on the hardware side.

Because of reason number two, this is unfortunately not so decisive, especially when we consider that smartphones are mainly used for snapshots.

2.) Computational photography is currently king

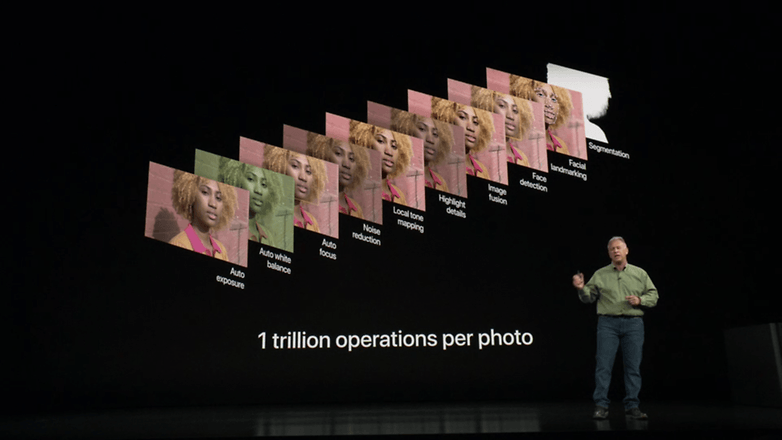

Google has set the bar high with its HDR+ technology. The software merges several images into one. The single original no longer exists, as many processing steps ensure the final image is of top quality. Apple has introduced a very similar system with Smart HDR. The software selects suitable frames from several differently exposed images and combines them in such a way that a single image is created at the end. Samsung has been using similar software processing since the Galaxy S8 (but hasn't really got it under control yet, if I'm allowed this dig).
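To make the idea of merging several frames into one more concrete, here is a minimal sketch of a simple exposure fusion. It is not Google's HDR+ or Apple's Smart HDR, whose pipelines are far more involved (alignment, denoising, tone mapping); it only illustrates the basic principle. The function names `well_exposedness` and `fuse_exposures`, the mid-gray target of 0.5 and the `sigma` value are assumptions made for this example, and frames are assumed to be already aligned grayscale images with values between 0 and 1.

```python
import math


def well_exposedness(value, mid=0.5, sigma=0.2):
    """Weight a pixel by how close it is to mid-gray (value in [0, 1]).

    Hypothetical weighting for illustration only: pixels that are nearly
    black or nearly white get a low weight, well-exposed pixels a high one.
    """
    return math.exp(-((value - mid) ** 2) / (2 * sigma ** 2))


def fuse_exposures(frames):
    """Blend same-sized, pre-aligned grayscale frames pixel by pixel.

    Each frame is a list of rows; each row is a list of floats in [0, 1].
    The result is a weighted average that favors well-exposed pixels.
    """
    height, width = len(frames[0]), len(frames[0][0])
    fused = []
    for y in range(height):
        row = []
        for x in range(width):
            values = [frame[y][x] for frame in frames]
            weights = [well_exposedness(v) for v in values]
            total = sum(weights)  # always positive, exp() never returns 0 here
            row.append(sum(w * v for w, v in zip(weights, values)) / total)
        fused.append(row)
    return fused


# Two made-up 2x2 exposures of the same scene: one too dark, one too bright.
dark = [[0.02, 0.45], [0.10, 0.50]]
bright = [[0.40, 1.00], [0.55, 0.98]]
fused = fuse_exposures([dark, bright])
```

In the fused result, the shadow pixel is taken mostly from the bright frame and the blown-out highlight mostly from the dark frame, which is the reason no single "original" photo exists anymore.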

Despite all the differences, Apple and Google have thrown the usual paradigm of one photo overboard. Smartphone cameras no longer rely on the best sensor hardware, but on the best post-processing software. The secret recipe for the best photos lies in the image processing of the sensor data. Want proof? Gladly! Google downgraded its sensor hardware during the jump from the first to the second Pixel phone, and the newer generation still shoots better pictures!

One reason iPhones contain such powerful chips is that Apple needs them for image processing. Allegedly, iPhones carry out about 1 trillion calculations per photo! By the way, the Neural Processing Unit (NPU) is partly used for this.

3.) Sony needs to make something clear to customers

One thing cannot be denied: photos with aggressive HDR such as Google's can quickly appear somewhat artificial. The question now is: Which way does Sony want to go? In order to improve the quality of Sony's smartphone cameras, one could take the HDR path from Google or Apple. But there are risks involved. Google worked on HDR+ for several years until the technology was first used in the Nexus 5X/6P - and since then HDR+ has been getting better every year.

That's why it doesn't sound very promising to go this way. Sony would have to catch up, and it would probably take several years. Not a good idea.

Instead, Sony's new smartphone boss has to pull off the trick of adapting the software of Sony’s large professional cameras to smaller smartphones. Even the large cameras use image processors that process the sensor data and save the optimized images as JPGs. RAWs, on the other hand, contain all the sensor data but are also developed and optimized via software. And anyone who has ever seen the controls in Lightroom & Co. will admit that this is anything but purist.

We read in advertising brochures that Xperia smartphones are already using Sony's photo know-how, but that's not credible - at least not to the extent that Sony says. The fact that Sony boss Yoshida is promising quick results here gives us cause for hope: Perhaps we'll already see the first signs of this in the Xperia XZ3. But with the XZ4 at the latest, Sony needs to deliver.

High-quality camera software could well bring a kind of reboot for Sony smartphones. However, Sony also has to bring its customers up to speed on what Google and Apple do differently. It needs to communicate why bright colors aren't always the most important thing, and what advantages its own approach offers.

Whether Sony will go this way remains to be seen. I'm keeping my fingers crossed, because the alternatives are buzzwords à la dual camera and Even-Smarter-HDR. Those don't solve Sony's paradox; they only speed up the decline.

What do you think Sony will do to catch up to the camera top dogs?

Source: Wirtschaftswoche

Some people make mistakes but some companies make blunders.

a. Go the Sony way and not the other way (Bring back the XZ block designs with an edge to edge screen. No Notch nonsense.)

b. Get the headphone jack back and apologize to any customer that purchases the XZ3 (A company that sells high-end audio products which require a headphone jack doesn't have one in its own phone...Genius)

c. Jack up the specs 8GB RAM and 512GB storage. 4K comes at a cost. Jack up the camera specs and have a uniform camera software across all phones. (e.g. push the camera as an app in the Android store)

d. Finally, stop the 6-monthly cycle. Charge a premium for a "Sony" phone and have a 3 year/OS upgrade cycle.

All these problems are obviated if OEMs will simply fully implement Android's Camera2 API and ensure their hardware (image sensor, other relevant sensors, and lenses) are compatible - then let their own devs work on stock camera apps, but open up to third party development. There are several good alternative ports of Google's HDR+ but they require compliant hardware and the Camera2 API. Meanwhile the three apps I regularly use on my aging Lollipop phone (Camera FV-5, Snap Camera HDR, and Bacon Camera) have not been updated in nearly a year.

I have to suspect the dev paralysis is because of the far too many partially compliant and sensor / lens-crazy hardware configurations filling the summer air like ragweed. Users are increasingly stuck with the OEM's stock app, however good or lousy that app may be, which they never were three years ago. The OEMs will each decide whether to emphasize point/click algorithmic conformity or manual settings for user creativity or giddy fun filter bundles, but there's no reason developers shouldn't be freed up for other approaches with full Camera2 compliance. I put control of the Camera API right up with control of Android security and version updates as a serious systemic problem that can only be solved by Google taking full control of the operating system, as Microsoft does for multiple OEMs and Apple does for itself.

No, we don't need huge sensors. We need even better processing and Google knows how to do it. If even camera users start to think their cameras would be much better with processing power similar to Google's HDR+, then that's the way to go. How quickly can you pull out your DSLR or mirrorless on top of "the Shard" and just click and take a PERFECT shot of the city at night? You can't. That's because NOBODY can process a picture at such incredible speed like Google. I don't care about the occasional noise in a corner of the photo as long as I can see the bricks and windows of St Paul's Cathedral. But I do care if that picture is blurred or just not good because it was on AUTO on that Canon or Nikon. So Sony doesn't need huge sensors; the phone would then be huge and would still need an amazing processing algorithm...

You want better pictures from a smartphone? Stop with all the goofy tricks, and install LARGER image sensors and real glass on the back. 2-5x OPTICAL retracting zoom lens. But with the stylish set, don't look for that to happen. Some of these "miracle" camera smartphone photos look a bit overprocessed.