Beautiful blur for smartphone portraits: How the bokeh effect works

For decades, the only way to achieve atmospheric portraits with a beautiful bokeh effect was with a large image sensor and lenses with large focal lengths. Over the past several years, smartphones have learned to create background blur in software. In this article, we explain what bokeh is, how smartphones emulate the effect and which approaches exist for doing so.

About focus and blurriness in cameras

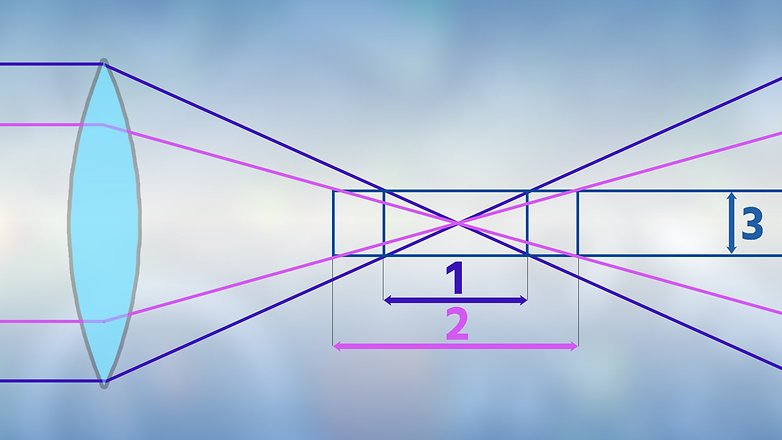

I would like to start by explaining the concepts of focus and blur and, for simplicity's sake, treat the camera lens as a single lens element. The greater the diameter of this lens, the more quickly the rays in the beam path shown in the graphic diverge. As a result, objects outside the plane of focus are no longer imaged as a point but as a circle that grows larger as the lens diameter increases. As soon as one of these circles is larger on the sensor than a single pixel, that part of the picture is no longer in focus.
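The relationship between lens diameter and blur circle can be sketched with the ideal thin-lens model. This is a simplified illustration, not a model of any real camera: the function name, the 50-millimeter focal length, the distances and the 1.4-micrometer pixel pitch are all assumed example values.

```python
def blur_circle_diameter_mm(aperture_diameter_mm, focal_length_mm,
                            focus_distance_mm, object_distance_mm):
    """Blur-disc diameter on the sensor for an ideal thin lens (all values in mm)."""
    if focus_distance_mm <= focal_length_mm:
        raise ValueError("focus distance must be greater than the focal length")
    if object_distance_mm <= 0:
        raise ValueError("object distance must be positive")
    # Magnification of the plane that is in focus.
    magnification = focal_length_mm / (focus_distance_mm - focal_length_mm)
    # How far the object is from the focus plane, relative to its own distance.
    relative_defocus = abs(object_distance_mm - focus_distance_mm) / object_distance_mm
    return aperture_diameter_mm * magnification * relative_defocus


# Assumed example: lens focused at 2 m, background object at 4 m.
narrow = blur_circle_diameter_mm(12.5, 50.0, 2000.0, 4000.0)
wide = blur_circle_diameter_mm(25.0, 50.0, 2000.0, 4000.0)

PIXEL_PITCH_MM = 0.0014  # assumed pixel size, for illustration only
for label, diameter in (("12.5 mm lens", narrow), ("25 mm lens", wide)):
    in_focus = diameter <= PIXEL_PITCH_MM
    print(f"{label}: blur circle {diameter:.4f} mm, in focus: {in_focus}")
```

In this model, doubling the lens diameter doubles the blur circle, while an object exactly at the focus distance produces a blur circle of zero.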

Apart from the lens diameter, the focal length also plays a role: the longer the focal length, the more selective the focus. The 24 or 26 millimeters you may know from the datasheet is not the true focal length of the lens; what is specified there is the 35-mm equivalent focal length. It describes the focal length that a lens on a camera with a 36 x 24-millimeter sensor would need in order to achieve the same angle of view as the smartphone's lens-sensor combination. The real focal lengths of smartphone wide-angle lenses actually range from about 4.0 to 4.5 millimeters.
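The conversion between real and 35-mm equivalent focal length can be sketched as follows. The sketch uses the common convention of comparing sensor diagonals; the sensor size of 5.6 x 4.2 millimeters is an assumed example value and not taken from a specific datasheet.

```python
import math

# Diagonal of the 36 x 24 mm reference format, about 43.27 mm.
FULL_FRAME_DIAGONAL_MM = math.hypot(36.0, 24.0)


def crop_factor(sensor_width_mm, sensor_height_mm):
    """Ratio of the reference diagonal to the sensor diagonal."""
    return FULL_FRAME_DIAGONAL_MM / math.hypot(sensor_width_mm, sensor_height_mm)


def equivalent_focal_length_mm(real_focal_length_mm, sensor_width_mm, sensor_height_mm):
    """35-mm equivalent focal length for a given real focal length and sensor size."""
    return real_focal_length_mm * crop_factor(sensor_width_mm, sensor_height_mm)


# Assumed example: a 4.25 mm lens in front of a 5.6 x 4.2 mm sensor.
equivalent = equivalent_focal_length_mm(4.25, 5.6, 4.2)
print(f"crop factor: {crop_factor(5.6, 4.2):.2f}")
print(f"35-mm equivalent: {equivalent:.1f} mm")
```

With these assumed numbers, the real focal length of 4.25 millimeters corresponds to roughly 26 millimeters in 35-mm terms, which matches the order of magnitude found on datasheets.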

One thing is clear given the tiny lens and the short focal lengths: the depth of field on smartphones is always huge. The smaller circle of confusion that smartphones have compared with single-lens reflex cameras because of their tiny pixels is not enough to change that. I have deliberately left out one factor that influences the depth of field: the distance to the subject. The closer the subject, the shallower the depth of field. Most of you will have observed this effect in macro photos taken with a smartphone.
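How large the difference is can be estimated with the standard hyperfocal-distance formulas. The sketch below is an approximation under assumed values: the f-number of 1.8 and the circles of confusion of 0.03 and 0.005 millimeters are illustrative choices, not figures from the article.

```python
import math


def hyperfocal_mm(focal_length_mm, f_number, coc_mm):
    """Hyperfocal distance for a given focal length, f-number and circle of confusion."""
    return focal_length_mm ** 2 / (f_number * coc_mm) + focal_length_mm


def depth_of_field_mm(focal_length_mm, f_number, coc_mm, focus_distance_mm):
    """Return (near limit, far limit) of acceptable sharpness in mm."""
    h = hyperfocal_mm(focal_length_mm, f_number, coc_mm)
    f = focal_length_mm
    s = focus_distance_mm
    near = s * (h - f) / (h + s - 2 * f)
    # Beyond the hyperfocal distance everything up to infinity is sharp.
    far = math.inf if s >= h else s * (h - f) / (h - s)
    return near, far


# Assumed example: subject at 2 m, both lenses at f/1.8.
camera_near, camera_far = depth_of_field_mm(50.0, 1.8, 0.03, 2000.0)
phone_near, phone_far = depth_of_field_mm(4.25, 1.8, 0.005, 2000.0)

print(f"50 mm lens:   {camera_near:.0f} mm to {camera_far:.0f} mm")
print(f"4.25 mm lens: {phone_near:.0f} mm to {phone_far:.0f} mm")
```

Under these assumptions, the sharp zone of the 50-millimeter lens is a thin slice around the subject, while the 4.25-millimeter lens keeps almost the entire scene behind the subject in focus.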

A lot of CPU horsepower instead of a large lens

What smartphones do have to offer, however, is a lot of computing power, sometimes combined with additional hardware, for reproducing the effect through image processing. Generally speaking, it always works the same way: the camera distinguishes between foreground and background and then selectively blurs the background. The more clearly the subject is separated from the background, the more impressive the effect.
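The basic principle of separating foreground from background and blurring only the background can be sketched in a few lines. This is a deliberately naive illustration, not how any particular phone implements it: the image is a small grayscale grid, the foreground mask is assumed to be given, and a simple box blur stands in for a real bokeh kernel.

```python
def box_blur(image, radius):
    """Average each pixel with its neighbors inside a square window."""
    height, width = len(image), len(image[0])
    blurred = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total, count = 0.0, 0
            for ny in range(max(0, y - radius), min(height, y + radius + 1)):
                for nx in range(max(0, x - radius), min(width, x + radius + 1)):
                    total += image[ny][nx]
                    count += 1
            blurred[y][x] = total / count
    return blurred


def synthetic_bokeh(image, foreground_mask, radius=1):
    """Keep foreground pixels sharp and replace background pixels with blurred ones."""
    blurred = box_blur(image, radius)
    return [
        [image[y][x] if foreground_mask[y][x] else blurred[y][x]
         for x in range(len(image[0]))]
        for y in range(len(image))
    ]


# Tiny example: one bright foreground pixel in the center of a dark background.
image = [[0, 0, 0],
         [0, 9, 0],
         [0, 0, 0]]
mask = [[False, False, False],
        [False, True, False],
        [False, False, False]]
result = synthetic_bokeh(image, mask)
for row in result:
    print(row)
```

Note that this naive blur lets the bright foreground pixel bleed into the blurred background, which is one reason real implementations need a clean separation between subject and background.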

The process has some inherent flaws, such as stray hairs that get blurred along with the background. Glass is also a persistent problem for bokeh functions: in most cases, the lenses of the subject's eyeglasses are assigned to the foreground, so the background visible through the glasses is not blurred, as it would be with an optical bokeh effect.

These smartphone experiments with depth of field are not entirely new. The HTC One (M8) and even various Nokia Windows Phone devices (R.I.P.) already brought the bokeh effect to pictures. However, neither the picture quality nor the processing speed was good enough, so the feature did not catch on until the past two years.

Then as now, different approaches were in use: the aforementioned HTC One (M8) and the Nokia devices each took a different one, and both can still be found in very similar form in today's smartphones. We will touch upon the differences and their pros and cons in the sections below. Apart from Sony, LG and HTC (with the exception of the One (M8)), all major manufacturers now offer devices with bokeh functionality.

Dual camera for bokeh effects

Like the HTC One (M8), which pioneered the dual camera, many smartphones today use a dual camera to calculate a depth map of the scene being photographed. Much like our brain with its two eyes, the smartphone exploits the offset between the two lenses. The software then blurs the parts of the image that are identified as background.
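How a depth map follows from the offset between two lenses can be sketched with the textbook stereo relation: depth equals focal length times baseline divided by disparity. The sketch assumes an ideal, rectified pinhole camera pair; the focal length of 3000 pixels, the 10-millimeter baseline and the disparity values are made-up example numbers.

```python
import math


def depth_from_disparity_mm(focal_length_px, baseline_mm, disparity_px):
    """Depth of a point from its pixel shift between the two camera views."""
    if disparity_px <= 0:
        # No measurable shift: treat the point as infinitely far away.
        return math.inf
    return focal_length_px * baseline_mm / disparity_px


def background_mask(disparity_map_px, focal_length_px, baseline_mm, max_subject_depth_mm):
    """Mark every pixel that lies farther away than the subject as background."""
    return [
        [depth_from_disparity_mm(focal_length_px, baseline_mm, d) > max_subject_depth_mm
         for d in row]
        for row in disparity_map_px
    ]


# Assumed example: the left column shifts by 30 px (near), the right one by 10 px or less (far).
disparities = [[30.0, 10.0],
               [30.0, 0.0]]
mask = background_mask(disparities, focal_length_px=3000.0, baseline_mm=10.0,
                       max_subject_depth_mm=1500.0)
print(mask)
```

The resulting mask is what a blur step would then use to decide which pixels to soften.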

Identical focal lengths

The dual cameras of Huawei, Honor, Nokia and Motorola use the same focal length for both sensors, which makes the bokeh function available for wide-angle photos. On the cheaper dual-camera models, such as the Honor 7X, the second camera has a resolution of a mere 2 megapixels, so its only purpose is to create a depth map.

On flagships, by contrast, the second camera serves an additional purpose. On the Huawei Mate 10 Pro, for instance, the second sensor takes high-resolution black-and-white photos that supplement the RGB shots of the main sensor with additional brightness information. This is meant to improve image quality, particularly when using digital zoom. The second camera on the OnePlus 5T, meanwhile, is intended to help with nighttime shots.

Different focal lengths

Other models, among them the Asus Zenfone 4, the iPhones and the OnePlus 5, use different focal lengths for the two lenses. As a result, the bokeh function is not available in wide-angle mode, since the telephoto module cannot provide a second view of the entire wide-angle image from which to create the depth map. The reverse does work, however: a crop from the wide-angle camera's image helps create a depth map for a telephoto shot.

In practice, this limitation is not too serious, since the telephoto module's longer focal length delivers nicer results for portrait photos anyway. One special case is the Galaxy Note 8: its wide-angle sensor offers dual-pixel autofocus, which makes the bokeh effect possible without a second camera, at least in theory. We will elaborate on that in a bit.

Exceptional cases

There are also occasional experiments with more elaborate camera systems in which the depth map is not calculated from the offset between two lenses. The iPhone X's front camera, for instance, projects an infrared dot pattern onto the surroundings, allowing it not only to detect the user's face but also to separate it cleanly from the surroundings. Huawei is working on a very similar system.

The Asus Zenfone AR and the Lenovo Phab 2 Pro, each of which has a time-of-flight camera integrated below the rear camera, take it a step further. They scan the room with an infrared laser, which in turn provides greater range. This technology, promoted by Google under the name Project Tango, has not managed to gain a foothold so far. We have already reported extensively on time-of-flight cameras and Project Tango elsewhere.
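The idea behind time-of-flight measurement can be sketched with simple arithmetic: light travels to the object and back, so the distance is half the round trip. This is a simplified pulse-based illustration; real sensors may measure the phase shift of modulated light instead, and the 20-nanosecond example value is an assumption.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_time_s):
    """Distance to an object from the round-trip time of a light pulse."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


# Assumed example: the reflected pulse arrives 20 nanoseconds after emission.
distance = tof_distance_m(20e-9)
print(f"distance: {distance:.2f} m")
```

The tiny time spans involved show why this approach requires dedicated hardware rather than a regular image sensor.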

Bokeh effects with just one lens

You are certainly familiar with the effect: Close one eye and your depth perception only works to a limited degree. So, how do smartphones with only one lens manage to distinguish between the foreground and background?

There used to be an app called Refocus for the aforementioned Windows Phones. It simply had the smartphone take photos with different focus points, and with the tap of a finger the user could then choose which areas should be in focus. Although it worked very well, it was very slow and thus only practical to a limited extent. Hardware and software are more advanced nowadays, however, which is why the effect now works even without focus bracketing.

The Google Pixel 2 offers the bokeh effect with a single lens. One feature of its IMX362 image sensor helps here: dual-pixel autofocus. It divides every pixel on the sensor into two halves, which, as with dual cameras, makes it possible to generate two slightly shifted images. However, the technical implementation is more complex than with two separate sensors, since the offset is not in the centimeter range but only about half the lens diameter. The final image is computed from a whole series of photos together with the depth map, and AI-supported image analysis is used as well.
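Why the small offset makes things harder can be illustrated with the same stereo relation that applies to dual cameras: the pixel shift between the two views shrinks in proportion to the baseline. The numbers below are assumptions for illustration only (focal length of 3000 pixels, baselines of 10 and 1 millimeters, subject at 1 meter, background at 3 meters) and do not describe the actual Pixel 2 hardware.

```python
def disparity_px(focal_length_px, baseline_mm, depth_mm):
    """Pixel shift of a point between two views separated by the baseline."""
    return focal_length_px * baseline_mm / depth_mm


def disparity_gap_px(focal_length_px, baseline_mm, subject_mm, background_mm):
    """Difference in pixel shift between subject and background."""
    return (disparity_px(focal_length_px, baseline_mm, subject_mm)
            - disparity_px(focal_length_px, baseline_mm, background_mm))


# Assumed example: subject at 1 m, background at 3 m.
dual_camera_gap = disparity_gap_px(3000.0, 10.0, 1000.0, 3000.0)
dual_pixel_gap = disparity_gap_px(3000.0, 1.0, 1000.0, 3000.0)

print(f"dual camera (10 mm baseline): {dual_camera_gap:.1f} px")
print(f"dual pixel (1 mm baseline):   {dual_pixel_gap:.1f} px")
```

With a baseline ten times smaller, the difference between subject and background is only a tenth as many pixels, so the depth estimate has to work with far subtler shifts.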

Samsung, for instance, also uses an image sensor with dual-pixel autofocus (the Sony IMX333) in its Galaxy S8 and S8+ but has so far forgone true bokeh functionality. Selective focus only works for macro shots and without any input from the user. However, the upcoming Oreo update is slated to give the Samsung duo a true bokeh mode. Other smartphones equipped with dual-pixel autofocus, such as the HTC U11 or the Moto G5 Plus, currently have no bokeh functionality either, although a software update could provide it.

What do you guys think?

In conclusion, there are many different ways to create atmospheric background blur, even with the small image sensors and tiny lenses found in smartphone cameras. Which approach would you like for your next smartphone? Let's discuss the pros and cons in the comments below!

"a large image sensor and lenses with large focal lengths" - you're forgetting aperture. Nice article though.

Very interesting topic.

bokka shmokka