AI in the arts: can a computer really be creative?

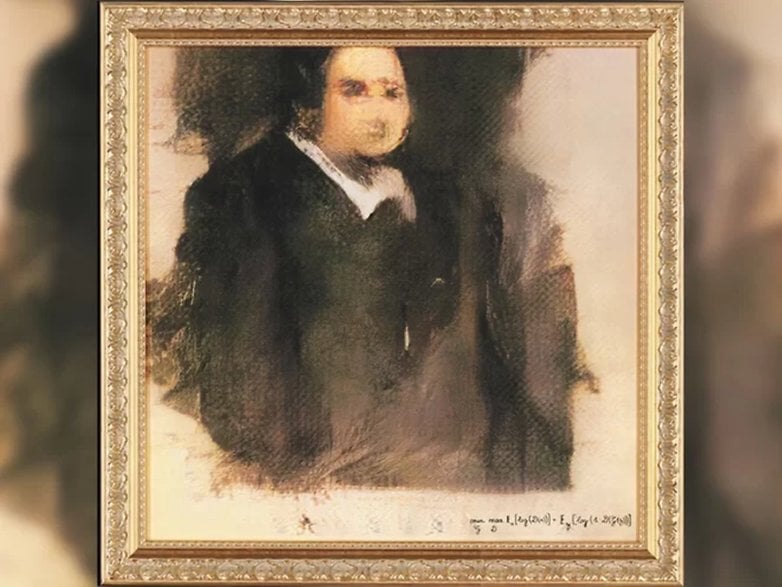

When a painting, Portrait of Edmond Belamy, sold for $432,000 in October last year, it sparked a debate that is still rumbling today. The reason was not the lofty price, which was significantly higher than estimates; it was the identity of the artist. Can a computer really create art?

Portrait of Edmond Belamy was created by a Paris-based art collective called Obvious. Hugo Caselles-Dupré, Pierre Fautrel and Gauthier Vernier, the trio behind Obvious, say the painting was created by artificial intelligence.

The project raised a lot of questions about artificial intelligence and creativity. The debate feels a little like the copyright dispute that followed the infamous ‘monkey selfie’ from 2011, when a Celebes crested macaque took equipment belonging to the British nature photographer David Slater, pointed the lens at its own face, and shot a picture. PETA argued that the macaque owned the copyright to the photograph, much to the bemusement of Slater.

The photographs had been widely published, appearing in several British newspapers after Slater licensed the images to the Caters News Agency. Because they were the work of a non-human animal, Wikimedia Commons, the online repository of free-use images, claimed there was no human author in whom copyright could be vested, and so treated them as being in the public domain.

Today, I am getting the sense that we are heading down the same road with AI in the art realm. If an intelligent machine creates a painting, or a song, or a poem, or a sculpture, who do we credit the creation to? And if the answer is unclear, what is the art world going to do without recognized artists?

Who’s the real artist, the computer or the humans that programmed it?

Portrait of Edmond Belamy was created by an algorithm composed of two parts. We already know that algorithms are good forgers: they can create a painting or a piece of music in the style of an artist they have ‘studied’ in detail. After being fed 15,000 portraits painted between the 14th and 20th centuries, the algorithm was able to produce a portrait in a similar style. This is known as the Generator side of the algorithm. Amadeus Code, the AI tool for songwriting, works on the same principle.

The second side, known as the Discriminator, acts as a checker. It tries to spot the difference between the real deal - detailed copies of portraits originally painted by humans - and new creations produced by the Generator side. The goal of the Generator is to fool the Discriminator. The Generator learns from its mistakes as the Discriminator makes correct guesses. The idea is that by teaching the forger to trick the detective, you force it to become more creative.
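The forger-versus-detective loop described above can be sketched in a few lines of Python. This is only a toy illustration under loud assumptions, not the code Obvious used: each "portrait" is a single number, the "real" ones are drawn from a bell curve centred on 4, and the names `train_toy_gan`, `sigmoid`, `a`, `b`, `w` and `c` are all hypothetical. The Generator is a straight line that turns random noise into a fake, and the Discriminator is a one-input classifier that outputs how likely it thinks a sample is real.

```python
import math
import random


def sigmoid(t):
    """Numerically stable logistic function: squashes any number into the range 0..1."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, lr=0.01, seed=0):
    """Alternate Discriminator and Generator updates on one-number 'portraits'.

    Assumptions (illustrative only): real samples come from a normal
    distribution with mean 4.0 and standard deviation 0.5.
    Returns the learned parameters (a, b, w, c).
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0   # Generator: fake = a * noise + b
    w, c = 0.1, 0.0   # Discriminator: p_real = sigmoid(w * x + c)

    for _ in range(steps):
        real = rng.gauss(4.0, 0.5)   # a genuine sample
        noise = rng.gauss(0.0, 1.0)  # random input for the forger
        fake = a * noise + b         # the forgery

        # Detective's turn: raise log D(real) + log(1 - D(fake)),
        # i.e. get better at telling real from fake.
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - d_real) * real - d_fake * fake)
        c += lr * ((1.0 - d_real) - d_fake)

        # Forger's turn: raise log D(fake), i.e. nudge the forgery in
        # whichever direction the detective currently finds more convincing.
        d_fake = sigmoid(w * fake + c)
        grad_fake = (1.0 - d_fake) * w
        a += lr * grad_fake * noise
        b += lr * grad_fake

    return a, b, w, c
```

Calling `train_toy_gan()` returns the four learned numbers; how closely the Generator ends up imitating the real data depends on the step count and learning rate, and adversarial training of this kind is known to be unstable. The point of the sketch is the structure: neither side is told what a good portrait looks like, and the Generator improves only through the Discriminator's feedback.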

This method is called a generative adversarial network, or GAN. It was created by Ian Goodfellow in 2014, and has sparked excitement among those working in the field of machine learning. GANs have been cited as a way to give machines an “imagination”, or at least something similar to what we mean by that word in humans. But does it count as true creativity? Does a GAN allow a machine to become an artist?

A top-down versus bottom-up approach

You may have heard of the top-down and bottom-up designs that are popular in thinking, teaching, or leadership structures. Both are systems of information processing and can be used to help us understand what is happening when an algorithm or AI is solving problems.

The top-down approach, sometimes referred to as logical reasoning, is where a programmer feeds information and data into a computer using analytical logic, having already figured out the approach needed to solve the problem or the task at hand. The computer then crunches through a load of algorithms to come up with an end result.

The bottom-up approach is more about creating a system that is greater than the sum of its parts - by grouping together simpler systems or algorithms that, when combined, create much more complex strategies. It's less about following the rules to find an answer and more about combining lots of simpler processes to collectively create complex ideas. If an AI is ever going to be truly creative, it is going to need to use a bottom-up approach.

If GAN allows AI to use a bottom-up approach, does that make it creative?

There is no doubt that with new techniques and advancements in machine learning, we are closer to creative computers than we have ever been before, but the fundamental question remains: did the computer create the piece of art, or did humans train it to create the art?

One of the other difficulties in talking about the role of AI in art is the definition of art itself. Before we start getting all Marcel Duchamp’s toilet, can we agree that all genuine art requires an element of originality? You could train a person (or computer) to create paintings in the style of Pablo Picasso, or songs in the style of Nirvana, but that is different from creating something completely original.

The other problem I have with labeling this type of AI as ‘creative’ is the human input aspect. The machine that created Portrait of Edmond Belamy did so because it was instructed to do so by humans. For machines to be classed as creative, we need to arrive at a situation where the AI is creating for the sake of expression, not on instruction.

It sounds insane - the idea that a computer might just fancy painting or writing or composing original art, but that is where we need to get to, in my opinion, before we can start classifying AI as ‘the artist’ behind a piece of work.

What do you think? Will we ever reach a stage where we can confidently call computers creative? Will we see a famous AI artist one day? Share your thoughts in the comments.

From a theoretical point of view, a computer can have unlimited computing power and unlimited storage space. Obviously, computers far outpace human processing power.

The computer processes information based on algorithms designed by people, and it can also extend these algorithms on its own, becoming an expert system able to learn from its own rules.

But even with all this immense computing power, one element will still not be quantifiable and cannot be put into the form of an algorithm - what I refer to as "imagination", creativity and intuition, elements specific to the human domain. No matter how many paintings a computer produces, and in whatever combination of colors, it will still not be able to reach human artistic subtlety.

The computer is a perfect computing tool, a helper for people, and so it must stay!

Your argument essentially boils down to the "no true Scotsman" fallacy (look it up on Wikipedia). If it's not "art for art's sake" then it's not art. People make unoriginal art for pay at the direction of other (typically non-creative) people every day and we still call it art. They don't have the freedom to choose the content or form; they can't even choose not to create it or they lose their job. The biggest difference between these people and AI is that the people took longer to train.

Examples include the photos you see in magazines and newspapers, all the images that go with linkbait headlines in online advertisements and quite a lot of company logos and signs. Writers have been churning out work they're not proud of and that isn't creative because they get paid by the word. So are none of them artists? Is none of that art?

For myself, for a thing to be art it needs to inspire creative thought about a topic. Sometimes the art itself is in the perceiver's reaction (some Dada was this way, as is some performance art); sometimes it is the thing. Sometimes we think about the piece or about the world or about ourselves. Sometimes we don't care and just want a pleasing object to decorate a room. That's art too. Does looking at an image or shape when you don't know how it was created or by whom inspire creative thought? If so, then that image or shape is art. Telling me who the artist was or was not doesn't change that.