Benchmarks: an objective performance score, not the full story

In the ever-changing world of technology, you need a yardstick to compare performance, because reading technical specifications is never enough. That’s why benchmarks were created. But why and how do we use them, and can we consider them reliable?

What are these benchmarks we often hear about?

Benchmarks are programs designed to test CPUs, GPUs, memory, or virtually any other aspect of a device that can be measured with a score, a time, a speed, and so on. The camera tests carried out by companies such as DxOMark (for example, measuring focus times) and the speed tests used to measure an internet connection also fall into the category of benchmarks.

Benchmarks can be categorized into two types:

- Synthetic benchmarks

- Real-world benchmarks

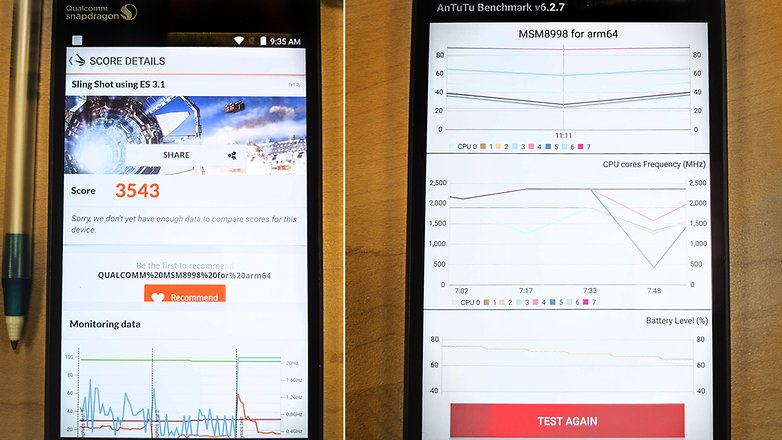

The first (and more common) type simulates an extreme workload and pushes the components to 100% of their capacity. This lets you evaluate the maximum performance of a device.

Real-world benchmarks simulate realistic (though still very demanding) workloads and are more useful for assessing a product’s true capabilities for specific purposes, such as running particular apps.
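To make the idea of a synthetic benchmark concrete, here is a minimal sketch in Python. It is only an illustration, not how any commercial benchmark works: the workload (`cpu_workload`), the function names, and the "iterations per second" scoring rule are all assumptions chosen for simplicity.

```python
import time


def cpu_workload(n):
    """Fixed, artificial workload: sum of the squares of the first n integers."""
    total = 0
    for i in range(n):
        total += i * i
    return total


def run_benchmark(n=200_000):
    """Time the workload once and turn the elapsed time into a score."""
    start = time.perf_counter()
    result = cpu_workload(n)
    elapsed = time.perf_counter() - start
    # Hypothetical scoring rule: iterations per second, higher is better.
    score = n / elapsed if elapsed > 0 else float("inf")
    return {"result": result, "seconds": elapsed, "score": score}


if __name__ == "__main__":
    report = run_benchmark()
    print(f"{report['seconds']:.4f} s, score {report['score']:.0f} iterations/s")
```

Because the workload is identical on every machine, the score can be compared across devices. A real-world benchmark would replace `cpu_workload` with a task people actually perform, such as opening an app or exporting a video.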

What is the purpose of these benchmarks?

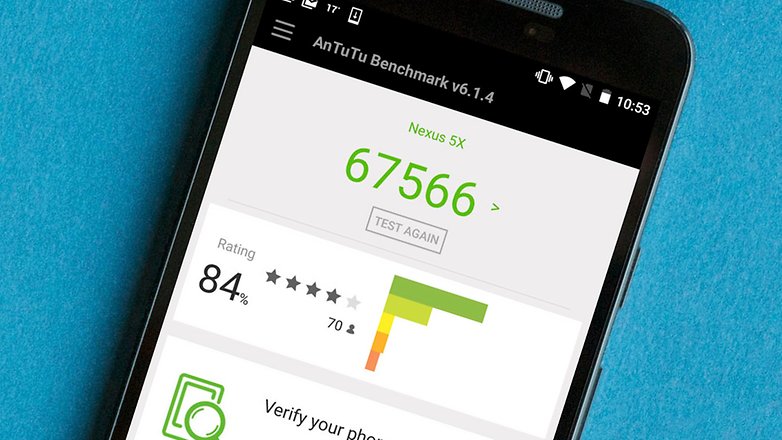

Benchmark apps (or PC programs) are designed for a specific purpose: to measure the performance of the device on which they are executed in order to compare it to other reference devices.

As the complexity of electronic devices increases, it is increasingly difficult to compare their performance by simply looking at specs. That’s why new performance measurement programs are always being created.

Can we trust them?

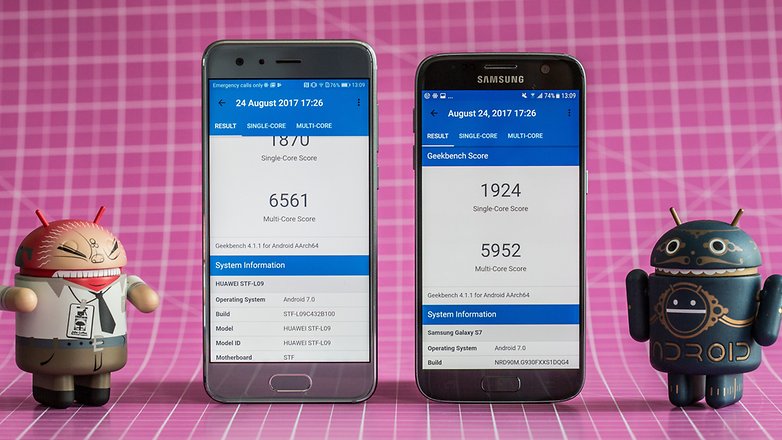

In theory, yes. A benchmark performs the same operations on every machine, so each device receives the same treatment. What you do need to know, however, is the state of the device before the test is run.

Here’s a practical example: if apps are running in the background on a smartphone during the test, the result will obviously differ from that of a second smartphone running the test with no other apps open. This is because some of the tested device’s resources may be in use by other programs or apps, which affects the final result.
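One common way to see how much the device's state affects a result is to repeat the same test several times and look at how far the timings spread. The sketch below assumes a simple stand-in workload and hypothetical helper names (`measure`, `summarize`); it is not taken from any particular benchmark app.

```python
import statistics
import time


def workload():
    """Stand-in for the operations a benchmark would run."""
    return sum(i * i for i in range(100_000))


def measure(runs=5):
    """Run the same workload several times and collect the elapsed times."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        workload()
        timings.append(time.perf_counter() - start)
    return timings


def summarize(timings):
    """Report the median time and the relative spread between runs."""
    median = statistics.median(timings)
    # Spread: gap between slowest and fastest run, relative to the median.
    spread = (max(timings) - min(timings)) / median if median > 0 else 0.0
    return {"median": median, "spread": spread}


if __name__ == "__main__":
    print(summarize(measure()))
```

A large spread suggests that something else, such as background apps, was competing for resources during the test, so a single run should not be trusted on its own.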

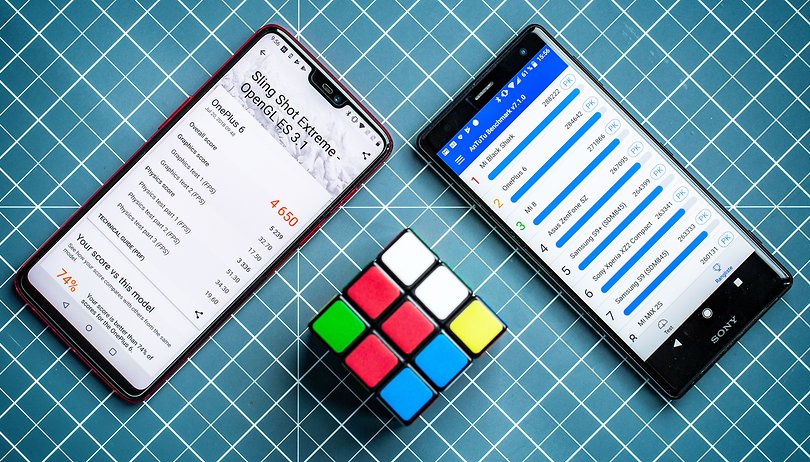

Sometimes, however, manufacturers have been caught cheating on these benchmark tests. Most recently, OnePlus was found to increase the performance of its smartphones when the most popular Android benchmarks were running on its devices. By recognizing the app that is being run, certain manufacturers can implement specific procedures that allow the hardware to perform better and achieve higher scores. A manufacturer that knows how these tests work can optimize its product to score higher on benchmarks, but this doesn’t always translate into better performance in real life.
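The mechanism described above, recognizing the running app and switching behavior, can be sketched in a few lines. This is purely illustrative: the package names, the profile names, and the `select_profile` function are hypothetical and are not taken from any real device firmware.

```python
# Hypothetical package names, used only for illustration.
KNOWN_BENCHMARK_APPS = {"com.example.benchmark", "com.example.gputest"}


def select_profile(foreground_app):
    """Return the power profile a benchmark-aware device might choose."""
    if foreground_app in KNOWN_BENCHMARK_APPS:
        # Special case: run at full speed only while a known benchmark is open.
        return "max_performance"
    # Everyday apps get the normal, more conservative profile.
    return "balanced"


if __name__ == "__main__":
    print(select_profile("com.example.benchmark"))
    print(select_profile("com.example.chat"))
```

The score measured under the special profile says little about how the device behaves with everyday apps, which never trigger it.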

Take the results with a grain of salt

Benchmarks are only indicative, and they are only useful if all devices used for comparison are treated in the same way. If all tests are carried out under the same conditions, it is possible to assess which product is objectively better, at least on paper. Benchmark results can easily be manipulated, however, and may not reflect how a device behaves in real life.

Some smartphones achieve amazing benchmark results but fail to deliver an acceptable user experience. Just think of Samsung’s Galaxy S range, which has top-tier hardware but sometimes isn’t as fluid and responsive as smartphones with lower performance on paper, such as Google’s Pixel smartphones.

Do you rely on benchmarks when choosing your tech purchases?